
Colossal-AI

An integrated large-scale model training system with efficient parallelization techniques.

Paper | Documentation | Examples | Forum | Blog

Features

Colossal-AI provides a collection of parallel training components. The goal is to let you write distributed deep learning models the same way you write a model on your laptop, with user-friendly tools that start distributed training in a few lines.

- Data Parallelism

- Pipeline Parallelism

- 1D, 2D, 2.5D, and 3D Tensor Parallelism

- Sequence Parallelism

- Friendly trainer and engine

- Extensible for new parallelism

- Mixed Precision Training

- Zero Redundancy Optimizer (ZeRO)
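
To give a feel for what ZeRO saves, the sketch below reproduces the per-GPU model-state accounting commonly used for mixed-precision Adam training: 2 bytes per parameter for fp16 weights, 2 for fp16 gradients, and 12 for fp32 optimizer state. The function name and the stage numbering are illustrative assumptions, not part of the Colossal-AI API, and activations and temporary buffers are ignored.

```python
def zero_model_state_bytes(num_params: int, num_gpus: int, stage: int) -> float:
    """Approximate per-GPU bytes for model states under a given ZeRO stage.

    stage 0: no partitioning (plain data parallelism)
    stage 1: optimizer state partitioned across GPUs
    stage 2: optimizer state and gradients partitioned
    stage 3: optimizer state, gradients, and weights partitioned
    """
    weights = 2 * num_params   # fp16 parameters
    grads = 2 * num_params     # fp16 gradients
    optim = 12 * num_params    # fp32 master weights + Adam momentum + variance
    if stage >= 1:
        optim /= num_gpus
    if stage >= 2:
        grads /= num_gpus
    if stage >= 3:
        weights /= num_gpus
    return weights + grads + optim


if __name__ == "__main__":
    # Hypothetical setting: a 7.5B-parameter model on 64 GPUs.
    for stage in range(4):
        gigabytes = zero_model_state_bytes(7_500_000_000, 64, stage) / 1e9
        print(f"stage {stage}: {gigabytes:.1f} GB per GPU")
```

The numbers only cover model states, so treat them as a lower bound on real memory use.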

Demo

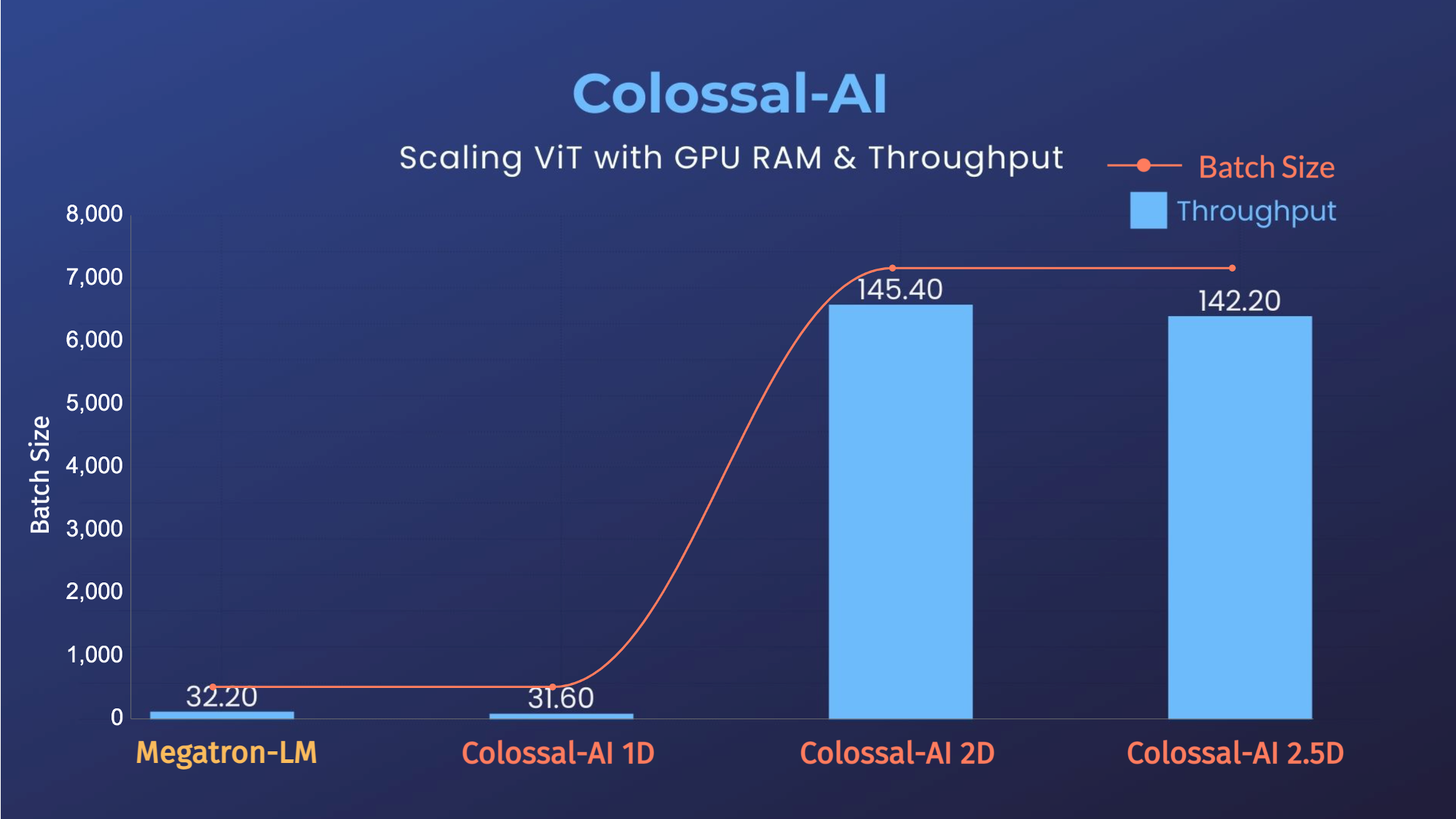

ViT

- 14x larger batch size and 5x faster training with a tensor parallel size of 64

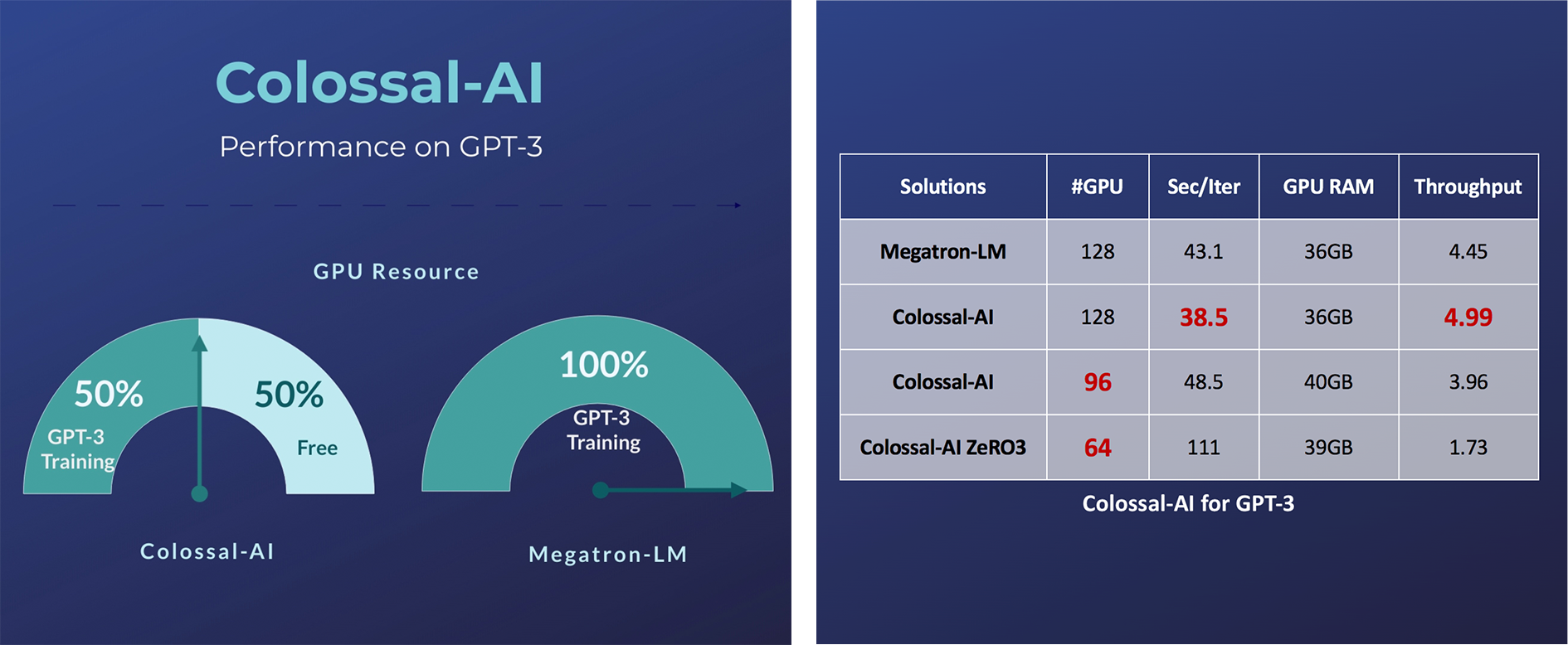

GPT-3

- 50% fewer GPU resources and 10.7% faster training

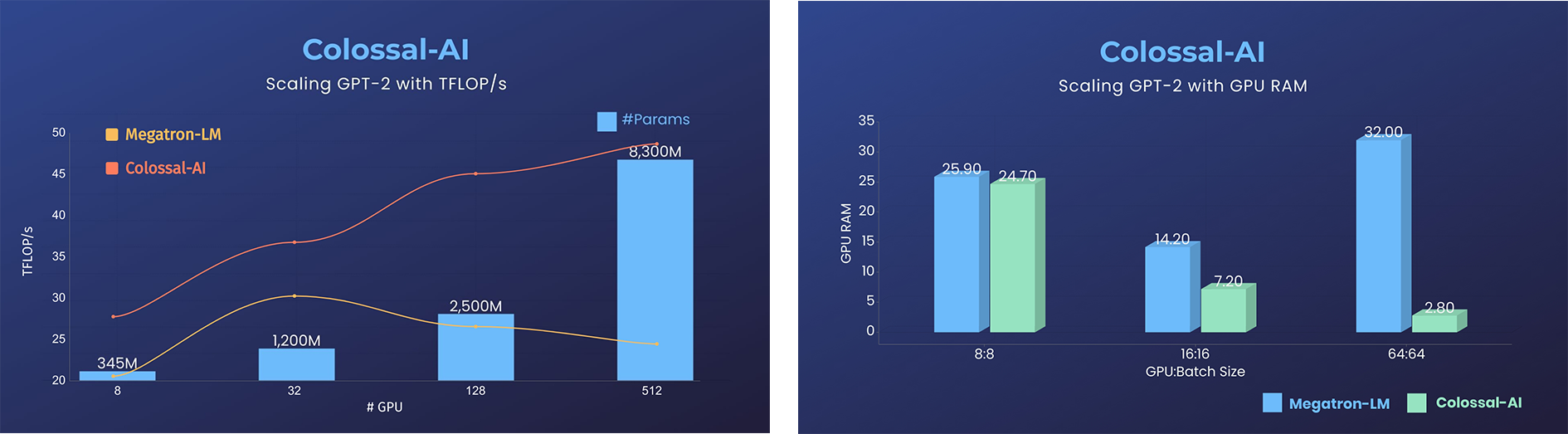

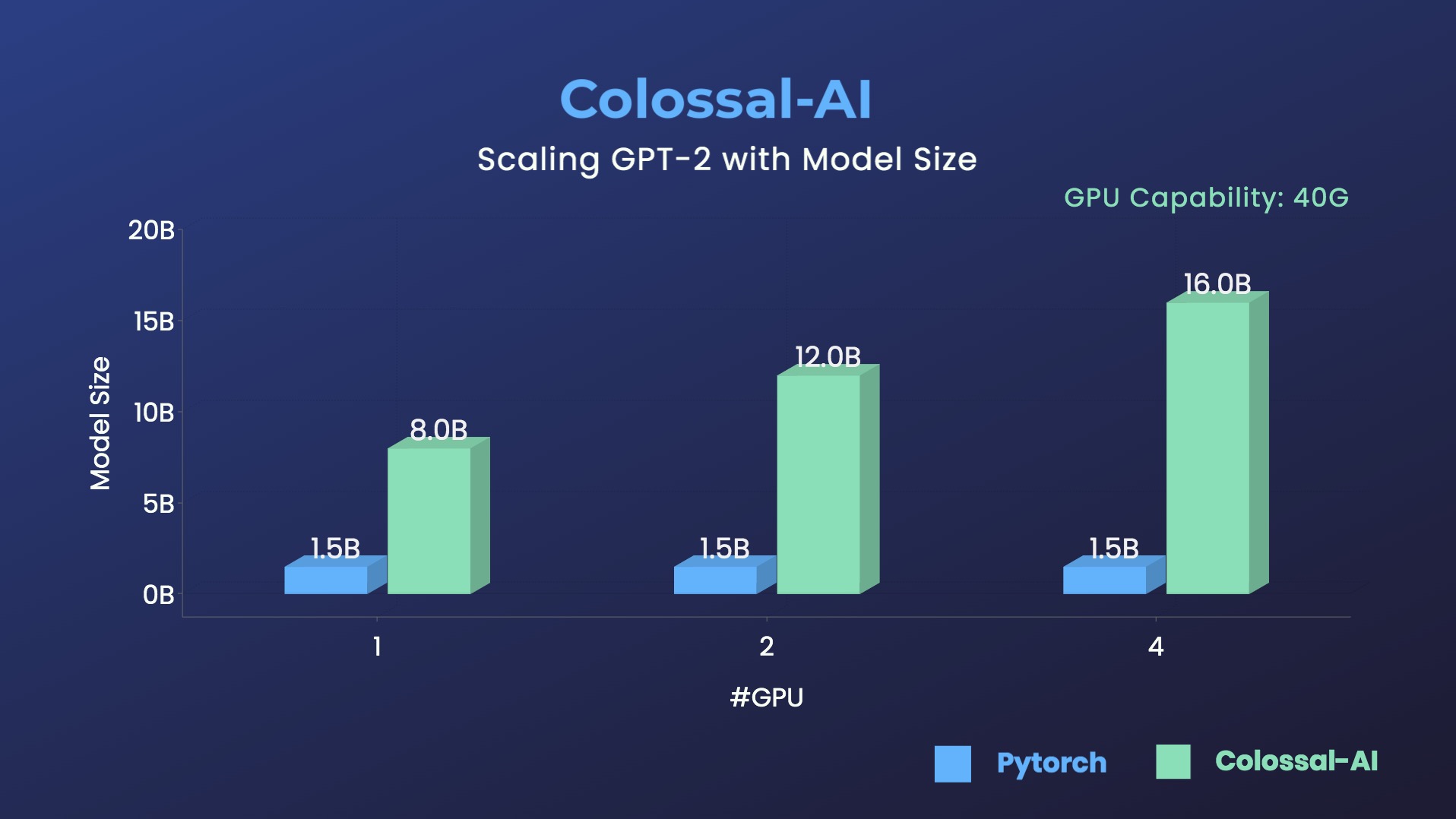

GPT-2

- 11x lower GPU memory consumption, and superlinear scaling efficiency with Tensor Parallelism

- 10.7x larger model size on the same hardware

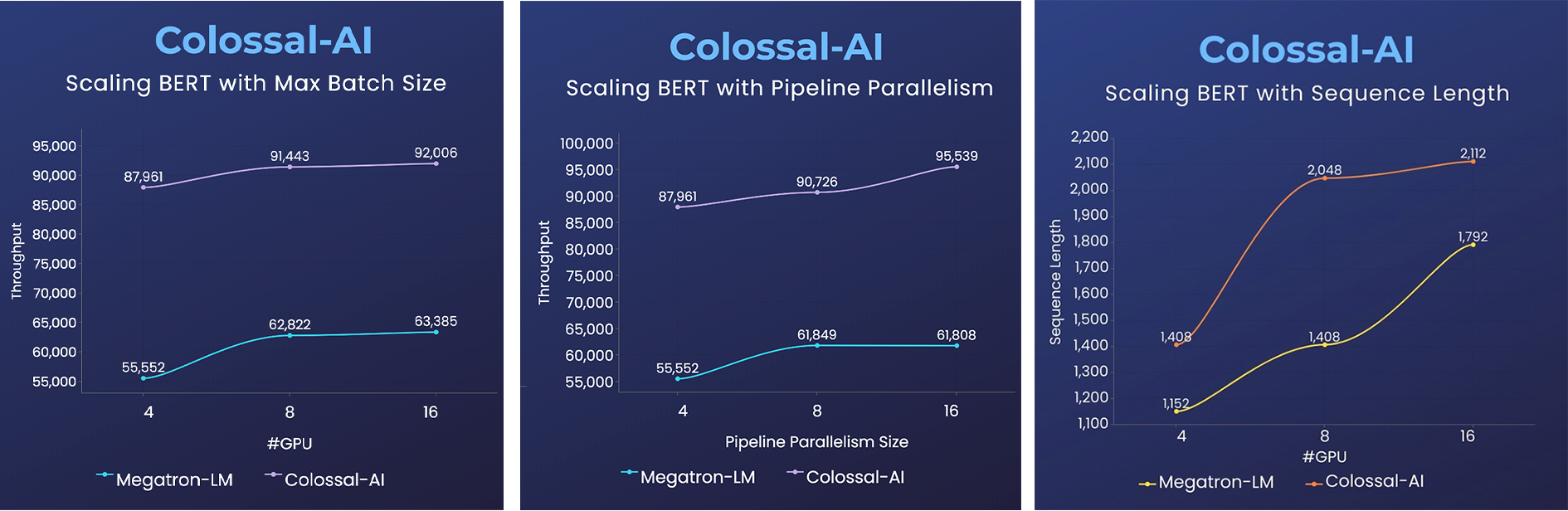

BERT

- 2x faster training, or 50% longer sequence length

Please visit our documentation and tutorials for more details.

Installation

PyPI

pip install colossalai

This command installs the CUDA extension if you have CUDA, NVCC, and torch installed.

If you don't want to install the CUDA extension, add --global-option="--no_cuda_ext":

pip install colossalai --global-option="--no_cuda_ext"

If you want to use ZeRO, you can run:

pip install colossalai[zero]

Install From Source

Installing from source gives you the version of Colossal-AI on the main branch of the repository. Feel free to create an issue if you encounter any problems. :-)

git clone https://github.com/hpcaitech/ColossalAI.git
cd ColossalAI

# install dependencies
pip install -r requirements/requirements.txt

# install colossalai
pip install .

If you don't want to install and enable CUDA kernel fusion (which is required when using a fused optimizer), run:

pip install --global-option="--no_cuda_ext" .
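
After either install path above, a quick sanity check is to ask Python which version of the package it can see. This is a minimal sketch using only the standard library; `installed_version` is a hypothetical helper name, and the check confirms only that the package metadata is present, not that the CUDA extension was built.

```python
from importlib import metadata
from typing import Optional


def installed_version(package: str) -> Optional[str]:
    """Return the installed version of `package`, or None if it is not installed."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


if __name__ == "__main__":
    version = installed_version("colossalai")
    if version is None:
        print("colossalai is not installed in this environment")
    else:
        print(f"colossalai {version} is installed")
```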

Use Docker

Run the following command to build a Docker image from the provided Dockerfile.

cd ColossalAI

docker build -t colossalai ./docker

Run the following command to start the docker container in interactive mode.

docker run -ti --gpus all --rm --ipc=host colossalai bash

Community

Join the Colossal-AI community on Forum, Slack, and WeChat to share your suggestions, feedback, and questions with our engineering team.

Contributing

If you wish to contribute to this project, please follow the guidelines in Contributing.

Thanks so much to all of our amazing contributors!

The order of contributor avatars is randomly shuffled.

Quick View

Start Distributed Training in a Few Lines

import colossalai
from colossalai.utils import get_dataloader

# my_config can be a path to a config file or a dictionary object.
# 'localhost' only works for a single node; specify the node name
# when using multiple nodes.
# rank and world_size identify this process within the job and must
# be defined before this call.
colossalai.launch(
    config=my_config,
    rank=rank,
    world_size=world_size,
    backend='nccl',
    port=29500,
    host='localhost'
)

# build your model
model = ...

# build your dataset; the dataloader uses a distributed data
# sampler by default
train_dataset = ...
train_dataloader = get_dataloader(dataset=train_dataset,
                                  shuffle=True
                                  )

# build your optimizer
optimizer = ...

# build your loss function
criterion = ...

# initialize colossalai
engine, train_dataloader, _, _ = colossalai.initialize(
    model=model,
    optimizer=optimizer,
    criterion=criterion,
    train_dataloader=train_dataloader
)

# start training (NUM_EPOCHS is a constant you define)
engine.train()
for epoch in range(NUM_EPOCHS):
    for data, label in train_dataloader:
        engine.zero_grad()
        output = engine(data)
        loss = engine.criterion(output, label)
        engine.backward(loss)
        engine.step()
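
The dataloader comment in the snippet above mentions a distributed data sampler. As a rough, framework-free illustration of that idea, each process can take every `world_size`-th index from a permutation that all processes shuffle with the same seed. `shard_indices` is a hypothetical helper, not the sampler that Colossal-AI or PyTorch actually uses; real samplers typically also pad the index list so every rank receives the same number of samples.

```python
import random
from typing import List


def shard_indices(dataset_len: int, rank: int, world_size: int,
                  shuffle: bool = True, seed: int = 0) -> List[int]:
    """Return the dataset indices that the process with this rank should read."""
    indices = list(range(dataset_len))
    if shuffle:
        # Every rank uses the same seed, so all ranks agree on the permutation.
        random.Random(seed).shuffle(indices)
    # Strided slice: rank r takes positions r, r + world_size, r + 2 * world_size, ...
    return indices[rank::world_size]


if __name__ == "__main__":
    # Hypothetical job: 10 samples split across 4 processes.
    for rank in range(4):
        print(rank, shard_indices(10, rank, world_size=4))
```

Because the slices are disjoint and together cover the whole permutation, each sample is seen by exactly one process per epoch.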

Write a Simple 2D Parallel Model

Suppose you have a huge MLP whose very large hidden size makes it difficult to fit on a single GPU. You can distribute the model weights across GPUs in a 2D mesh while still writing the model in a familiar way.

from colossalai.nn import Linear2D
import torch.nn as nn


class MLP_2D(nn.Module):

    def __init__(self):
        super().__init__()
        self.linear_1 = Linear2D(in_features=1024, out_features=16384)
        self.linear_2 = Linear2D(in_features=16384, out_features=1024)

    def forward(self, x):
        x = self.linear_1(x)
        x = self.linear_2(x)
        return x
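
To make "weights across GPUs in a 2D mesh" concrete, here is a conceptual, single-process sketch of a blocked matrix multiply on a q x q mesh: the input and the weight are each cut into q x q blocks, and the output block at mesh position (i, j) accumulates the products of row-i input blocks with column-j weight blocks. All function names are hypothetical, plain Python lists stand in for tensors, and this is an assumption about the general technique rather than a description of how `Linear2D` is implemented. Matrix dimensions are assumed to be divisible by q.

```python
from typing import List

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Plain dense matrix multiply."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def add(a: Matrix, b: Matrix) -> Matrix:
    """Element-wise sum of two matrices of the same shape."""
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]


def split_blocks(m: Matrix, q: int) -> List[List[Matrix]]:
    """Split a matrix into a q x q grid of equally sized blocks."""
    rows, cols = len(m) // q, len(m[0]) // q
    return [[[row[j * cols:(j + 1) * cols] for row in m[i * rows:(i + 1) * rows]]
             for j in range(q)] for i in range(q)]


def merge_blocks(blocks: List[List[Matrix]]) -> Matrix:
    """Inverse of split_blocks: stitch the grid back into one matrix."""
    merged = []
    for block_row in blocks:
        for r in range(len(block_row[0])):
            merged.append([v for block in block_row for v in block[r]])
    return merged


def linear_2d(x: Matrix, w: Matrix, q: int) -> Matrix:
    """Compute x @ w block by block, as a q x q device mesh would."""
    xb, wb = split_blocks(x, q), split_blocks(w, q)
    out = []
    for i in range(q):
        out_row = []
        for j in range(q):
            rows, cols = len(xb[i][0]), len(wb[0][j][0])
            acc = [[0.0] * cols for _ in range(rows)]
            for t in range(q):
                # Step t: the x block from mesh position (i, t) and the
                # w block from mesh position (t, j) meet at device (i, j).
                acc = add(acc, matmul(xb[i][t], wb[t][j]))
            out_row.append(acc)
        out.append(out_row)
    return merge_blocks(out)
```

Each simulated device only ever holds one block of the input, one block of the weight, and one block of the output, which is why the per-device memory shrinks as the mesh grows.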

Cite Us

@article{bian2021colossal,
  title={Colossal-AI: A Unified Deep Learning System For Large-Scale Parallel Training},
  author={Bian, Zhengda and Liu, Hongxin and Wang, Boxiang and Huang, Haichen and Li, Yongbin and Wang, Chuanrui and Cui, Fan and You, Yang},
  journal={arXiv preprint arXiv:2110.14883},
  year={2021}
}