diff --git a/README.md b/README.md

index 3d56bdd..90ef9d9 100644

--- a/README.md

+++ b/README.md

@@ -58,22 +58,24 @@ InternLM2 series are released with the following features:

| Model | Transformers(HF) | ModelScope(HF) | OpenXLab(HF) | Release Date |

|---------------------------|------------------------------------------------------------------------------------------|-------------------------------------------------------------------------------------------------------------------------------------|-------------------------------------------------------------------------------------------------------------------------------------------------------------|--------------|

-| **InternLM2 Chat 20B** | [🤗internlm/internlm2-chat-20b](https://huggingface.co/internlm/internlm2-chat-20b) | [ internlm2-chat-20b](https://modelscope.cn/models/Shanghai_AI_Laboratory/internlm2-chat-20b/summary) | [](https://openxlab.org.cn/models/detail/OpenLMLab/internlm2-chat-20b) | 2024-01-17 |

-| **InternLM2 20B** | [🤗internlm/internlm2-20b](https://huggingface.co/internlm/internlm2-20b) | [ internlm2-20b](https://modelscope.cn/models/Shanghai_AI_Laboratory/internlm2-20b/summary) | [](https://openxlab.org.cn/models/detail/OpenLMLab/internlm2-20b) | 2024-01-17 |

-| **InternLM2 Chat 20B SFT** | [🤗internlm/internlm2-chat-20b-sft](https://huggingface.co/internlm/internlm2-chat-20b-sft) | [ internlm2-chat-20b-sft](https://modelscope.cn/models/Shanghai_AI_Laboratory/internlm2-chat-20b-sft/summary) | [](https://openxlab.org.cn/models/detail/OpenLMLab/internlm2-chat-20b-sft) | 2024-01-17 |

-| **InternLM2 Base 20B** | [🤗internlm/internlm2-base-20b](https://huggingface.co/internlm/internlm2-base-20b) | [ internlm2-base-20b](https://modelscope.cn/models/Shanghai_AI_Laboratory/internlm2-base-20b/summary) | [](https://openxlab.org.cn/models/detail/OpenLMLab/internlm2-base-20b) | 2024-01-17 |

-| **InternLM2 Chat 7B** | [🤗internlm/internlm2-chat-7b](https://huggingface.co/internlm/internlm2-chat-7b) | [ internlm2-chat-7b](https://modelscope.cn/models/Shanghai_AI_Laboratory/internlm2-chat-7b/summary) | [](https://openxlab.org.cn/models/detail/OpenLMLab/internlm2-chat-7b) | 2024-01-17 |

-| **InternLM2 7B** | [🤗internlm/internlm2-7b](https://huggingface.co/internlm/internlm2-7b) | [ internlm2-7b](https://modelscope.cn/models/Shanghai_AI_Laboratory/internlm2-7b/summary) | [](https://openxlab.org.cn/models/detail/OpenLMLab/internlm2-7b) | 2024-01-17 |

-| **InternLM2 Chat 7B SFT** | [🤗internlm/internlm2-chat-7b-sft](https://huggingface.co/internlm/internlm2-chat-7b-sft) | [ internlm2-chat-7b-sft](https://modelscope.cn/models/Shanghai_AI_Laboratory/internlm2-chat-7b-sft/summary) | [](https://openxlab.org.cn/models/detail/OpenLMLab/internlm2-chat-7b-sft) | 2024-01-17 |

-| **InternLM2 Base 7B** | [🤗internlm/internlm2-base-7b](https://huggingface.co/internlm/internlm2-base-7b) | [ internlm2-base-7b](https://modelscope.cn/models/Shanghai_AI_Laboratory/internlm2-base-7b/summary) | [](https://openxlab.org.cn/models/detail/OpenLMLab/internlm2-base-7b) | 2024-01-17 |

+| **InternLM2-Base-7B** | [🤗internlm/internlm2-base-7b](https://huggingface.co/internlm/internlm2-base-7b) | [internlm2-base-7b](https://modelscope.cn/models/Shanghai_AI_Laboratory/internlm2-base-7b/summary) | [internlm2-base-7b](https://openxlab.org.cn/models/detail/OpenLMLab/internlm2-base-7b) | 2024-01-17 |

+| **InternLM2-7B** | [🤗internlm/internlm2-7b](https://huggingface.co/internlm/internlm2-7b) | [internlm2-7b](https://modelscope.cn/models/Shanghai_AI_Laboratory/internlm2-7b/summary) | [internlm2-7b](https://openxlab.org.cn/models/detail/OpenLMLab/internlm2-7b) | 2024-01-17 |

+| **InternLM2-Chat-7B-SFT** | [🤗internlm/internlm2-chat-7b-sft](https://huggingface.co/internlm/internlm2-chat-7b-sft) | [internlm2-chat-7b-sft](https://modelscope.cn/models/Shanghai_AI_Laboratory/internlm2-chat-7b-sft/summary) | [internlm2-chat-7b-sft](https://openxlab.org.cn/models/detail/OpenLMLab/internlm2-chat-7b-sft) | 2024-01-17 |

+| **InternLM2-Chat-7B** | [🤗internlm/internlm2-chat-7b](https://huggingface.co/internlm/internlm2-chat-7b) | [internlm2-chat-7b](https://modelscope.cn/models/Shanghai_AI_Laboratory/internlm2-chat-7b/summary) | [internlm2-chat-7b](https://openxlab.org.cn/models/detail/OpenLMLab/internlm2-chat-7b) | 2024-01-17 |

+| **InternLM2-Base-20B** | [🤗internlm/internlm2-base-20b](https://huggingface.co/internlm/internlm2-base-20b) | [internlm2-base-20b](https://modelscope.cn/models/Shanghai_AI_Laboratory/internlm2-base-20b/summary) | [internlm2-base-20b](https://openxlab.org.cn/models/detail/OpenLMLab/internlm2-base-20b) | 2024-01-17 |

+| **InternLM2-20B** | [🤗internlm/internlm2-20b](https://huggingface.co/internlm/internlm2-20b) | [internlm2-20b](https://modelscope.cn/models/Shanghai_AI_Laboratory/internlm2-20b/summary) | [internlm2-20b](https://openxlab.org.cn/models/detail/OpenLMLab/internlm2-20b) | 2024-01-17 |

+| **InternLM2-Chat-20B-SFT** | [🤗internlm/internlm2-chat-20b-sft](https://huggingface.co/internlm/internlm2-chat-20b-sft) | [internlm2-chat-20b-sft](https://modelscope.cn/models/Shanghai_AI_Laboratory/internlm2-chat-20b-sft/summary) | [internlm2-chat-20b-sft](https://openxlab.org.cn/models/detail/OpenLMLab/internlm2-chat-20b-sft) | 2024-01-17 |

+| **InternLM2-Chat-20B** | [🤗internlm/internlm2-chat-20b](https://huggingface.co/internlm/internlm2-chat-20b) | [internlm2-chat-20b](https://modelscope.cn/models/Shanghai_AI_Laboratory/internlm2-chat-20b/summary) | [internlm2-chat-20b](https://openxlab.org.cn/models/detail/OpenLMLab/internlm2-chat-20b) | 2024-01-17 |

+

**Note of Models:**

-The release of InternLM2 series contains two model sizes: 7B and 20B. 7B models are efficient for research and application and 20B models are more powerful and can support more complex scenarios. For each model size, there are three types of models for different user requirements

+The InternLM2 series is released in two model sizes: 7B and 20B. The 7B models are efficient for research and applications, while the 20B models are more powerful and can support more complex scenarios. For each model size, there are four types of models for different user requirements:

1. InternLM2-Base: Foundation models with high quality and high adaptation flexibility, which serve as a good starting point for downstream deep adaptations.

2. InternLM2: Optimized in multiple dimensions based on InternLM2-Base, obtaining state-of-the-art performance in evaluation with good language capability. InternLM2 models are recommended for consideration in most applications.

-3. InternLM2-Chat: InternLM2-Chat have gone through SFT and online RLHF based on InternLM2-Base model, for better instruction following, chat experience and function calling, which is recommended for downstream applications. We also released their corresponding SFT version, termed InternLM2 Chat 7/20B SFT, to ease the research for alignment.

+3. InternLM2-Chat-SFT: Built on the InternLM2-Base model and trained with supervised fine-tuning (SFT) for human alignment; released to ease research on alignment.

+4. InternLM2-Chat: Further optimized for conversational interaction on top of InternLM2-Chat-SFT through RLHF. It excels at instruction following, empathetic chatting, and function calling (tool invocation), and is recommended for downstream applications.

**Limitations:** Although we have made efforts to ensure the safety of the model during the training process and to encourage the model to generate text that complies with ethical and legal requirements, the model may still produce unexpected outputs due to its size and probabilistic generation paradigm. For example, the generated responses may contain biases, discrimination, or other harmful content. Please do not propagate such content. We are not responsible for any consequences resulting from the dissemination of harmful information.

@@ -126,30 +128,33 @@ The chat models adopt [chatml format](./chat/chat_format.md) to support both cha

### Import from Transformers

-To load the InternLM2 7B Chat model using Transformers, use the following code:

+To load the InternLM2-Chat-7B model using Transformers, use the following code:

```python

->>> from transformers import AutoTokenizer, AutoModelForCausalLM

->>> tokenizer = AutoTokenizer.from_pretrained("internlm/internlm2-chat-7b", trust_remote_code=True)

->>> model = AutoModelForCausalLM.from_pretrained("internlm/internlm2-chat-7b", trust_remote_code=True).cuda()

->>> model = model.eval()

->>> response, history = model.chat(tokenizer, "hello", history=[])

->>> print(response)

-Hello! How can I help you today?

->>> response, history = model.chat(tokenizer, "please provide three suggestions about time management", history=history)

->>> print(response)

+import torch

+from transformers import AutoTokenizer, AutoModelForCausalLM

+tokenizer = AutoTokenizer.from_pretrained("internlm/internlm2-chat-7b", trust_remote_code=True)

+# Set `torch_dtype=torch.float16` to load the model in float16; otherwise it is loaded in float32, which may cause an out-of-memory (OOM) error.

+model = AutoModelForCausalLM.from_pretrained("internlm/internlm2-chat-7b", trust_remote_code=True, torch_dtype=torch.float16).cuda()

+model = model.eval()

+response, history = model.chat(tokenizer, "hello", history=[])

+print(response)

+# Output: Hello! How can I help you today?

+response, history = model.chat(tokenizer, "please provide three suggestions about time management", history=history)

+print(response)

```

### Import from ModelScope

-To load the InternLM model using ModelScope, use the following code:

+To load the InternLM2-Chat-7B model using ModelScope, use the following code:

```python

-from modelscope import snapshot_download, AutoTokenizer, AutoModelForCausalLM

import torch

+from modelscope import snapshot_download, AutoTokenizer, AutoModelForCausalLM

model_dir = snapshot_download('Shanghai_AI_Laboratory/internlm2-chat-7b')

-tokenizer = AutoTokenizer.from_pretrained(model_dir, device_map="auto", trust_remote_code=True,torch_dtype=torch.float16)

-model = AutoModelForCausalLM.from_pretrained(model_dir,device_map="auto", trust_remote_code=True,torch_dtype=torch.float16)

+tokenizer = AutoTokenizer.from_pretrained(model_dir, trust_remote_code=True)

+# Set `torch_dtype=torch.float16` to load the model in float16; otherwise it is loaded in float32, which may cause an out-of-memory (OOM) error.

+model = AutoModelForCausalLM.from_pretrained(model_dir, device_map="auto", trust_remote_code=True, torch_dtype=torch.float16)

model = model.eval()

response, history = model.chat(tokenizer, "hello", history=[])

print(response)

@@ -192,7 +197,6 @@ Please refer to [finetune docs](./finetune/) for fine-tuning with InternLM.

**Note:** We have migrated the whole training functionality in this project to [InternEvo](https://github.com/InternLM/InternEvo) for easier user experience, which provides efficient pre-training and fine-tuning infra for training InternLM.

-

## Evaluation

We utilize [OpenCompass](https://github.com/open-compass/opencompass) for model evaluation. In InternLM-2, we primarily focus on standard objective evaluation, long-context evaluation (needle in a haystack), data contamination assessment, agent evaluation, and subjective evaluation.
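The renamed table rows follow a regular pattern: lowercasing the display name (for example `InternLM2-Chat-7B-SFT`) gives the repository slug used by every hub link in the table. The sketch below derives the three IDs from a display name. It assumes the pattern holds for all eight rows, which is an observation about this table rather than a documented naming rule, and `hub_ids` is a hypothetical helper.

```python
def hub_ids(display_name: str) -> dict:
    """Hypothetical helper: map a display name from the model table to hub repo IDs.

    Assumes the slug is simply the lowercased display name, as in every
    row of the table above.
    """
    slug = display_name.lower()
    return {
        "huggingface": f"internlm/{slug}",
        "modelscope": f"Shanghai_AI_Laboratory/{slug}",
        "openxlab": f"OpenLMLab/{slug}",
    }


for name in ["InternLM2-Base-7B", "InternLM2-Chat-7B-SFT", "InternLM2-Chat-20B"]:
    print(name, "->", hub_ids(name)["huggingface"])
```

The Hugging Face ID is the string passed to `from_pretrained` in the loading example, and the ModelScope ID is the one passed to `snapshot_download`.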

diff --git a/README_zh-CN.md b/README_zh-CN.md

index c8fb4f2..7ed44c6 100644

--- a/README_zh-CN.md

+++ b/README_zh-CN.md

@@ -56,22 +56,23 @@ InternLM2 系列模型在本仓库正式发布,具有如下特性:

| Model | Transformers(HF) | ModelScope(HF) | OpenXLab(HF) | Release Date |

|---------------------------|------------------------------------------------------------------------------------------|-------------------------------------------------------------------------------------------------------------------------------------|-------------------------------------------------------------------------------------------------------------------------------------------------------------|--------------|

-| **InternLM2 Chat 20B** | [🤗internlm/internlm2-chat-20b](https://huggingface.co/internlm/internlm2-chat-20b) | [ internlm2-chat-20b](https://modelscope.cn/models/Shanghai_AI_Laboratory/internlm2-chat-20b/summary) | [](https://openxlab.org.cn/models/detail/OpenLMLab/internlm2-chat-20b) | 2024-01-17 |

-| **InternLM2 20B** | [🤗internlm/internlm2-20b](https://huggingface.co/internlm/internlm2-20b) | [ internlm2-20b](https://modelscope.cn/models/Shanghai_AI_Laboratory/internlm2-20b/summary) | [](https://openxlab.org.cn/models/detail/OpenLMLab/internlm2-20b) | 2024-01-17 |

-| **InternLM2 Chat 20B SFT** | [🤗internlm/internlm2-chat-20b-sft](https://huggingface.co/internlm/internlm2-chat-20b-sft) | [ internlm2-chat-20b-sft](https://modelscope.cn/models/Shanghai_AI_Laboratory/internlm2-chat-20b-sft/summary) | [](https://openxlab.org.cn/models/detail/OpenLMLab/internlm2-chat-20b-sft) | 2024-01-17 |

-| **InternLM2 Base 20B** | [🤗internlm/internlm2-base-20b](https://huggingface.co/internlm/internlm2-base-20b) | [ internlm2-base-20b](https://modelscope.cn/models/Shanghai_AI_Laboratory/internlm2-base-20b/summary) | [](https://openxlab.org.cn/models/detail/OpenLMLab/internlm2-base-20b) | 2024-01-17 |

-| **InternLM2 Chat 7B** | [🤗internlm/internlm2-chat-7b](https://huggingface.co/internlm/internlm2-chat-7b) | [ internlm2-chat-7b](https://modelscope.cn/models/Shanghai_AI_Laboratory/internlm2-chat-7b/summary) | [](https://openxlab.org.cn/models/detail/OpenLMLab/internlm2-chat-7b) | 2024-01-17 |

-| **InternLM2 7B** | [🤗internlm/internlm2-7b](https://huggingface.co/internlm/internlm2-7b) | [ internlm2-7b](https://modelscope.cn/models/Shanghai_AI_Laboratory/internlm2-7b/summary) | [](https://openxlab.org.cn/models/detail/OpenLMLab/internlm2-7b) | 2024-01-17 |

-| **InternLM2 Chat 7B SFT** | [🤗internlm/internlm2-chat-7b-sft](https://huggingface.co/internlm/internlm2-chat-7b-sft) | [ internlm2-chat-7b-sft](https://modelscope.cn/models/Shanghai_AI_Laboratory/internlm2-chat-7b-sft/summary) | [](https://openxlab.org.cn/models/detail/OpenLMLab/internlm2-chat-7b-sft) | 2024-01-17 |

-| **InternLM2 Base 7B** | [🤗internlm/internlm2-base-7b](https://huggingface.co/internlm/internlm2-base-7b) | [ internlm2-base-7b](https://modelscope.cn/models/Shanghai_AI_Laboratory/internlm2-base-7b/summary) | [](https://openxlab.org.cn/models/detail/OpenLMLab/internlm2-base-7b) | 2024-01-17 |

+| **InternLM2-Base-7B** | [🤗internlm/internlm2-base-7b](https://huggingface.co/internlm/internlm2-base-7b) | [internlm2-base-7b](https://modelscope.cn/models/Shanghai_AI_Laboratory/internlm2-base-7b/summary) | [internlm2-base-7b](https://openxlab.org.cn/models/detail/OpenLMLab/internlm2-base-7b) | 2024-01-17 |

+| **InternLM2-7B** | [🤗internlm/internlm2-7b](https://huggingface.co/internlm/internlm2-7b) | [internlm2-7b](https://modelscope.cn/models/Shanghai_AI_Laboratory/internlm2-7b/summary) | [internlm2-7b](https://openxlab.org.cn/models/detail/OpenLMLab/internlm2-7b) | 2024-01-17 |

+| **InternLM2-Chat-7B-SFT** | [🤗internlm/internlm2-chat-7b-sft](https://huggingface.co/internlm/internlm2-chat-7b-sft) | [internlm2-chat-7b-sft](https://modelscope.cn/models/Shanghai_AI_Laboratory/internlm2-chat-7b-sft/summary) | [internlm2-chat-7b-sft](https://openxlab.org.cn/models/detail/OpenLMLab/internlm2-chat-7b-sft) | 2024-01-17 |

+| **InternLM2-Chat-7B** | [🤗internlm/internlm2-chat-7b](https://huggingface.co/internlm/internlm2-chat-7b) | [internlm2-chat-7b](https://modelscope.cn/models/Shanghai_AI_Laboratory/internlm2-chat-7b/summary) | [internlm2-chat-7b](https://openxlab.org.cn/models/detail/OpenLMLab/internlm2-chat-7b) | 2024-01-17 |

+| **InternLM2-Base-20B** | [🤗internlm/internlm2-base-20b](https://huggingface.co/internlm/internlm2-base-20b) | [internlm2-base-20b](https://modelscope.cn/models/Shanghai_AI_Laboratory/internlm2-base-20b/summary) | [internlm2-base-20b](https://openxlab.org.cn/models/detail/OpenLMLab/internlm2-base-20b) | 2024-01-17 |

+| **InternLM2-20B** | [🤗internlm/internlm2-20b](https://huggingface.co/internlm/internlm2-20b) | [internlm2-20b](https://modelscope.cn/models/Shanghai_AI_Laboratory/internlm2-20b/summary) | [internlm2-20b](https://openxlab.org.cn/models/detail/OpenLMLab/internlm2-20b) | 2024-01-17 |

+| **InternLM2-Chat-20B-SFT** | [🤗internlm/internlm2-chat-20b-sft](https://huggingface.co/internlm/internlm2-chat-20b-sft) | [internlm2-chat-20b-sft](https://modelscope.cn/models/Shanghai_AI_Laboratory/internlm2-chat-20b-sft/summary) | [internlm2-chat-20b-sft](https://openxlab.org.cn/models/detail/OpenLMLab/internlm2-chat-20b-sft) | 2024-01-17 |

+| **InternLM2-Chat-20B** | [🤗internlm/internlm2-chat-20b](https://huggingface.co/internlm/internlm2-chat-20b) | [internlm2-chat-20b](https://modelscope.cn/models/Shanghai_AI_Laboratory/internlm2-chat-20b/summary) | [internlm2-chat-20b](https://openxlab.org.cn/models/detail/OpenLMLab/internlm2-chat-20b) | 2024-01-17 |

**关于模型说明:**

-在此次发布中,InternLM2 包含两种模型规格:7B 和 20B。7B 为轻量级的研究和应用提供了一个轻便但性能不俗的模型,20B 模型的综合性能更为强劲,可以有效支持更加复杂的实用场景。面向不同的使用需求,每个规格包含三个模型版本:

+在此次发布中,InternLM2 包含两种模型规格:7B 和 20B。7B 为轻量级的研究和应用提供了一个轻便但性能不俗的模型,20B 模型的综合性能更为强劲,可以有效支持更加复杂的实用场景。面向不同的使用需求,每个规格包含四个模型版本:

1. InternLM2-Base:高质量和具有很强可塑性的模型基座,是模型进行深度领域适配的高质量起点。

2. InternLM2:在 Base 模型基础上,在多个能力方向进行了强化,在评测中成绩优异,同时保持了很好的通用语言能力,是我们推荐的在大部分应用中考虑选用的优秀基座。

-3. InternLM2-Chat:InternLM2-Chat 模型在 InternLM2-Base 模型的基础上,经过了 SFT 和 RLHF,面向对话交互进行了优化,具有较好的指令遵循、共情聊天和调用工具等的能力,是我们推荐直接用于下游应用的模型。我们同时开源了这些模型使用的 SFT 版本方便社区的对齐研究,标记为 InternLM2-Chat 7B/20B SFT。

+3. InternLM2-Chat-SFT:基于 InternLM2-Base 模型基座,进行有监督的微调对齐训练。

+4. InternLM2-Chat:在 InternLM2-Chat-SFT 的基础上,通过基于人类反馈的强化学习(RLHF)进一步面向对话交互进行了优化,在指令遵循、共情聊天和工具调用方面表现出色,是我们推荐直接用于下游应用的对话模型。

**局限性:** 尽管在训练过程中我们非常注重模型的安全性,尽力促使模型输出符合伦理和法律要求的文本,但受限于模型大小以及概率生成范式,模型可能会产生各种不符合预期的输出,例如回复内容包含偏见、歧视等有害内容,请勿传播这些内容。由于传播不良信息导致的任何后果,本项目不承担责任。

@@ -124,18 +125,20 @@ InternLM2 系列模型在本仓库正式发布,具有如下特性:

### 通过 Transformers 加载

-通过以下的代码从 Transformers 加载 InternLM 模型 (可修改模型名称替换不同的模型)

+通过以下的代码从 Transformers 加载 InternLM2-Chat-7B 模型 (可修改模型名称替换不同的模型)

```python

->>> from transformers import AutoTokenizer, AutoModelForCausalLM

->>> tokenizer = AutoTokenizer.from_pretrained("internlm/internlm2-chat-7b", trust_remote_code=True)

->>> model = AutoModelForCausalLM.from_pretrained("internlm/internlm2-chat-7b", trust_remote_code=True).cuda()

->>> model = model.eval()

->>> response, history = model.chat(tokenizer, "你好", history=[])

->>> print(response)

-你好!有什么我可以帮助你的吗?

->>> response, history = model.chat(tokenizer, "请提供三个管理时间的建议。", history=history)

->>> print(response)

+import torch

+from transformers import AutoTokenizer, AutoModelForCausalLM

+tokenizer = AutoTokenizer.from_pretrained("internlm/internlm2-chat-7b", trust_remote_code=True)

+# 设置`torch_dtype=torch.float16`来将模型精度指定为torch.float16,否则可能会因为您的硬件原因造成显存不足的问题。

+model = AutoModelForCausalLM.from_pretrained("internlm/internlm2-chat-7b", trust_remote_code=True, torch_dtype=torch.float16).cuda()

+model = model.eval()

+response, history = model.chat(tokenizer, "你好", history=[])

+print(response)

+# 模型输出:你好!有什么我可以帮助你的吗?

+response, history = model.chat(tokenizer, "请提供三个管理时间的建议。", history=history)

+print(response)

```

### 通过 ModelScope 加载

@@ -143,11 +146,11 @@ InternLM2 系列模型在本仓库正式发布,具有如下特性:

通过以下的代码从 ModelScope 加载 InternLM 模型 (可修改模型名称替换不同的模型)

```python

-from modelscope import snapshot_download, AutoTokenizer, AutoModelForCausalLM

import torch

+from modelscope import snapshot_download, AutoTokenizer, AutoModelForCausalLM

model_dir = snapshot_download('Shanghai_AI_Laboratory/internlm2-chat-7b')

-tokenizer = AutoTokenizer.from_pretrained(model_dir, device_map="auto", trust_remote_code=True,torch_dtype=torch.float16)

-model = AutoModelForCausalLM.from_pretrained(model_dir,device_map="auto", trust_remote_code=True,torch_dtype=torch.float16)

+tokenizer = AutoTokenizer.from_pretrained(model_dir, trust_remote_code=True)

+model = AutoModelForCausalLM.from_pretrained(model_dir, device_map="auto", trust_remote_code=True, torch_dtype=torch.float16)

model = model.eval()

response, history = model.chat(tokenizer, "hello", history=[])

print(response)

@@ -165,10 +168,6 @@ pip install transformers==4.30.2

streamlit run ./chat/web_demo.py

```

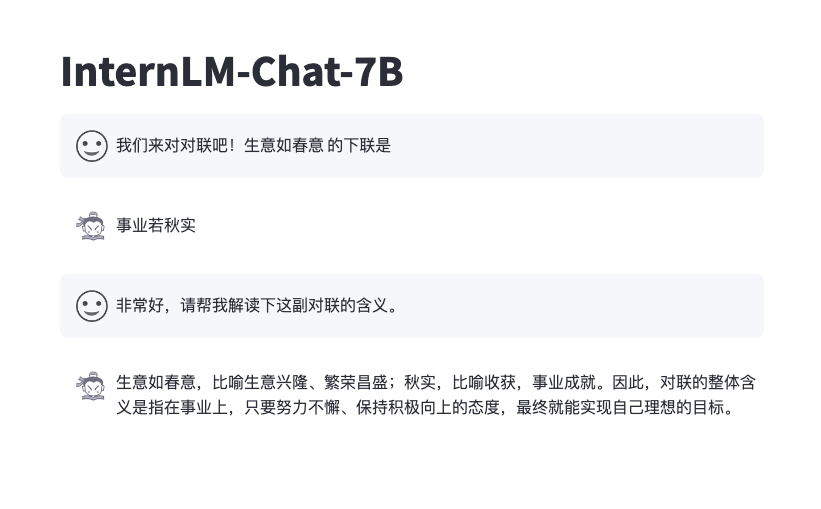

-效果如下

-

-

-

### 基于 InternLM 高性能部署

我们使用 [LMDeploy](https://github.com/InternLM/LMDeploy) 完成 InternLM 的一键部署。
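Both READMEs now pass `torch_dtype=torch.float16` and describe it as an out-of-memory safeguard. The arithmetic behind that is simple: float32 stores each parameter in 4 bytes, float16 in 2. The rough sketch below estimates the weight footprint. It assumes the nominal parameter counts in the model names (7B, 20B) and ignores activations, the KV cache and framework overhead, so real usage is higher.

```python
# Rough estimate of the memory needed just to hold the model weights.
# Assumption: nominal parameter counts from the model names; activations,
# the KV cache and CUDA/framework overhead are NOT included.
BYTES_PER_PARAM = {"float32": 4, "float16": 2}


def weight_memory_gib(num_params: float, dtype: str) -> float:
    """Return the size of the weights in GiB for the given dtype."""
    return num_params * BYTES_PER_PARAM[dtype] / 1024**3


for size_name, num_params in [("7B", 7e9), ("20B", 20e9)]:
    for dtype in ("float32", "float16"):
        print(f"{size_name} {dtype}: {weight_memory_gib(num_params, dtype):.1f} GiB")
```

For the 7B chat model this comes to roughly 26 GiB of weights in float32 versus roughly 13 GiB in float16, which is why the default float32 load can exhaust a single GPU.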

diff --git a/assets/compass_support.svg b/assets/compass_support.svg

index 02d2cc5..9a77df2 100644

--- a/assets/compass_support.svg

+++ b/assets/compass_support.svg

@@ -1 +1 @@

-

\ No newline at end of file

+

diff --git a/assets/license.svg b/assets/license.svg

index 8e072ee..91f9344 100644

--- a/assets/license.svg

+++ b/assets/license.svg

@@ -1 +1 @@

-

\ No newline at end of file

+

diff --git a/assets/logo.svg b/assets/logo.svg

index 0d74a61..a921584 100644

--- a/assets/logo.svg

+++ b/assets/logo.svg

@@ -20,4 +20,4 @@

-

\ No newline at end of file

+

diff --git a/chat/openaoe.md b/chat/openaoe.md

index 6038b44..056e59e 100644

--- a/chat/openaoe.md

+++ b/chat/openaoe.md

@@ -12,10 +12,10 @@ Currently already supported LLMs: [InternLM2-Chat-7B](https://huggingface.co/int

We provide three different ways to run OpenAOE: `run by pip`, `run by docker` and `run by source code` as well.

-### Run by pip

+### Run by pip

#### **Install**

```shell

-pip install -U openaoe

+pip install -U openaoe

```

#### **Start**

```shell

@@ -65,7 +65,7 @@ python -m main -f /path/to/your/config-template.yaml

```

> [!TIP]

-> `/path/to/your/config.yaml` is the configuration file loaded by OpenAOE at startup,

+> `/path/to/your/config.yaml` is the configuration file loaded by OpenAOE at startup,

> which contains the relevant configuration information for the LLMs,

> including: API URLs, AKSKs, Tokens, etc.

> A template configuration yaml file can be found in `openaoe/backend/config/config.yaml`.

diff --git a/chat/openaoe_zh_cn.md b/chat/openaoe_zh_cn.md

index 7a9fc83..3640838 100644

--- a/chat/openaoe_zh_cn.md

+++ b/chat/openaoe_zh_cn.md

@@ -17,7 +17,7 @@

> 需要 python >= 3.9

#### **安装**

```shell

-pip install -U openaoe

+pip install -U openaoe

```

#### **运行**

```shell

@@ -50,7 +50,7 @@ docker run -p 10099:10099 -v /path/to/your/config-template.yaml:/app/config-temp

```shell

git clone https://github.com/internlm/OpenAOE

```

-2. [_可选_] (如果前端代码发生变动)重新构建前端项目

+2. [_可选_] (如果前端代码发生变动)重新构建前端项目

```shell

cd open-aoe/openaoe/frontend

npm install

diff --git a/chat/web_demo.py b/chat/web_demo.py

index a5c160a..c2a18df 100644

--- a/chat/web_demo.py

+++ b/chat/web_demo.py

@@ -11,11 +11,10 @@ from dataclasses import asdict

import streamlit as st

import torch

+from tools.transformers.interface import GenerationConfig, generate_interactive

from transformers import AutoModelForCausalLM, AutoTokenizer

from transformers.utils import logging

-from tools.transformers.interface import GenerationConfig, generate_interactive

-

logger = logging.get_logger(__name__)

@@ -109,9 +108,15 @@ def main():

):

# Display robot response in chat message container

message_placeholder.markdown(cur_response + "▌")

- message_placeholder.markdown(cur_response)

+ message_placeholder.markdown(cur_response) # pylint: disable=undefined-loop-variable

# Add robot response to chat history

- st.session_state.messages.append({"role": "robot", "content": cur_response, "avatar": robot_avator})

+ st.session_state.messages.append(

+ {

+ "role": "robot",

+ "content": cur_response, # pylint: disable=undefined-loop-variable

+ "avatar": robot_avator,

+ }

+ )

torch.cuda.empty_cache()
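The `# pylint: disable=undefined-loop-variable` comments silence a real edge case: `cur_response` is bound only by the streaming loop (whose header lies outside this hunk), so if the generator yields nothing, the code after the loop raises `NameError`. The minimal sketch below shows the alternative of binding the name before the loop. `fake_stream` and `collect_response` are hypothetical stand-ins, not the demo's `generate_interactive`, whose signature this diff does not show.

```python
from typing import Iterator, List


def fake_stream(chunks: List[str]) -> Iterator[str]:
    """Hypothetical stand-in for a streaming generator: yields growing partial responses."""
    text = ""
    for chunk in chunks:
        text += chunk
        yield text


def collect_response(stream: Iterator[str]) -> str:
    # Bind the name before the loop so it is defined even when the
    # generator yields nothing; this is the case pylint's
    # undefined-loop-variable check warns about.
    cur_response = ""
    for cur_response in stream:
        pass  # the demo would update its message placeholder here
    return cur_response


print(collect_response(fake_stream(["Hel", "lo"])))
```

With an initial value in place, the final `markdown(...)` call and the history append no longer need the pylint suppression.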

diff --git a/model_cards/internlm2_20b.md b/model_cards/internlm2_20b.md

index 693c26c..cecd717 100644

--- a/model_cards/internlm2_20b.md

+++ b/model_cards/internlm2_20b.md

@@ -38,5 +38,5 @@ We have evaluated InternLM2 on several important benchmarks using the open-sourc

| MBPP(Sanitized) | 51.8 | 51.4 | 63.0 | 65.8 | 78.9 | 79.0 |

-- The evaluation results were obtained from [OpenCompass](https://github.com/open-compass/opencompass) , and evaluation configuration can be found in the configuration files provided by [OpenCompass](https://github.com/open-compass/opencompass).

+- The evaluation results were obtained from [OpenCompass](https://github.com/open-compass/opencompass), and the evaluation configuration can be found in the configuration files provided by [OpenCompass](https://github.com/open-compass/opencompass).

- The evaluation data may have numerical differences due to the version iteration of [OpenCompass](https://github.com/open-compass/opencompass), so please refer to the latest evaluation results of [OpenCompass](https://github.com/open-compass/opencompass).

diff --git a/model_cards/internlm2_7b.md b/model_cards/internlm2_7b.md

index 89da02f..5abd4b7 100644

--- a/model_cards/internlm2_7b.md

+++ b/model_cards/internlm2_7b.md

@@ -38,5 +38,5 @@ We have evaluated InternLM2 on several important benchmarks using the open-sourc

| MBPP(Sanitized) | 51.8 | 51.4 | 63.0 | 65.8 | 78.9 | 79.0 |

-- The evaluation results were obtained from [OpenCompass](https://github.com/open-compass/opencompass) , and evaluation configuration can be found in the configuration files provided by [OpenCompass](https://github.com/open-compass/opencompass).

+- The evaluation results were obtained from [OpenCompass](https://github.com/open-compass/opencompass), and the evaluation configuration can be found in the configuration files provided by [OpenCompass](https://github.com/open-compass/opencompass).

- The evaluation data may have numerical differences due to the version iteration of [OpenCompass](https://github.com/open-compass/opencompass), so please refer to the latest evaluation results of [OpenCompass](https://github.com/open-compass/opencompass).