mirror of https://github.com/hpcaitech/ColossalAI

[Refactor] remove useless inference code (#5022)

* remove useless code
* fix quant model
* fix test import bug
* mv original inference legacy
* fix chatglm2

pull/5035/head

parent

81b8f5e76a

commit

c6295c3381

|

|

@ -9,8 +9,8 @@ from colossalai.pipeline.stage_manager import PipelineStageManager

|

|||

from colossalai.shardformer import ShardConfig, ShardFormer

|

||||

from colossalai.shardformer.policies.base_policy import Policy

|

||||

|

||||

from ..pipeline.microbatch_manager import MicroBatchManager

|

||||

from ..tensor_parallel.kvcache_manager import MemoryManager

|

||||

from ..kvcache_manager import MemoryManager

|

||||

from .microbatch_manager import MicroBatchManager

|

||||

|

||||

PP_AXIS, TP_AXIS = 0, 1

|

||||

|

||||

|

|

|

|||

|

|

@ -0,0 +1,248 @@

|

|||

from enum import Enum

|

||||

from typing import Dict

|

||||

|

||||

import torch

|

||||

|

||||

from ..kvcache_manager import BatchInferState, MemoryManager

|

||||

|

||||

__all__ = ["MicroBatchManager"]

|

||||

|

||||

|

||||

class Status(Enum):

|

||||

PREFILL = 1

|

||||

GENERATE = 2

|

||||

DONE = 3

|

||||

COOLDOWN = 4

|

||||

|

||||

|

||||

class MicroBatchDescription:

|

||||

"""

|

||||

This class records the information of each micro batch and performs update operations on it.

|

||||

This class is the base class of `HeadMicroBatchDescription` and `BodyMicroBatchDescription`; for more

|

||||

details, please refer to the docs of these two classes below.

|

||||

|

||||

Args:

|

||||

inputs_dict (Dict[str, torch.Tensor]): the inputs of current stage. The key should have `input_ids` and `attention_mask`.

|

||||

output_dict (Dict[str, torch.Tensor]): the outputs of previous stage. The key should have `hidden_states` and `past_key_values`.

|

||||

"""

|

||||

|

||||

def __init__(

|

||||

self,

|

||||

inputs_dict: Dict[str, torch.Tensor],

|

||||

max_input_len: int,

|

||||

max_output_len: int,

|

||||

cache_manager: MemoryManager,

|

||||

) -> None:

|

||||

self.mb_length = inputs_dict["input_ids"].shape[-1]

|

||||

self.target_length = self.mb_length + max_output_len

|

||||

self.infer_state = BatchInferState.init_from_batch(

|

||||

batch=inputs_dict, max_input_len=max_input_len, max_output_len=max_output_len, cache_manager=cache_manager

|

||||

)

|

||||

# print(f"[init] {inputs_dict}, {max_input_len}, {max_output_len}, {cache_manager}, {self.infer_state}")

|

||||

|

||||

def update(self, *args, **kwargs):

|

||||

pass

|

||||

|

||||

@property

|

||||

def state(self):

|

||||

"""

|

||||

Return the state of the current micro batch: DONE when the current length equals the target length,

|

||||

COOLDOWN when it is one token short of the target length, and GENERATE otherwise.

|

||||

|

||||

"""

|

||||

# TODO: add the condition for early stopping

|

||||

if self.cur_length == self.target_length:

|

||||

return Status.DONE

|

||||

elif self.cur_length == self.target_length - 1:

|

||||

return Status.COOLDOWN

|

||||

else:

|

||||

return Status.GENERATE

|

||||

|

||||

@property

|

||||

def cur_length(self):

|

||||

"""

|

||||

Return the current sequence length of the micro batch

|

||||

|

||||

"""

|

||||

|

||||

|

||||

class HeadMicroBatchDescription(MicroBatchDescription):

|

||||

"""

|

||||

This class is used to record the information of the first stage of the pipeline. The first stage should have the attributes `input_ids` and `attention_mask`

|

||||

and `new_tokens`, where `new_tokens` holds the tokens generated by the first stage. Also, due to the pipeline schedule, the operation to update the

|

||||

information and the condition to determine the state are different from other stages.

|

||||

|

||||

Args:

|

||||

inputs_dict (Dict[str, torch.Tensor]): the inputs of current stage. The key should have `input_ids` and `attention_mask`.

|

||||

output_dict (Dict[str, torch.Tensor]): the outputs of previous stage. The key should have `hidden_states` and `past_key_values`.

|

||||

|

||||

"""

|

||||

|

||||

def __init__(

|

||||

self,

|

||||

inputs_dict: Dict[str, torch.Tensor],

|

||||

max_input_len: int,

|

||||

max_output_len: int,

|

||||

cache_manager: MemoryManager,

|

||||

) -> None:

|

||||

super().__init__(inputs_dict, max_input_len, max_output_len, cache_manager)

|

||||

assert inputs_dict is not None

|

||||

assert inputs_dict.get("input_ids") is not None and inputs_dict.get("attention_mask") is not None

|

||||

self.input_ids = inputs_dict["input_ids"]

|

||||

self.attn_mask = inputs_dict["attention_mask"]

|

||||

self.new_tokens = None

|

||||

|

||||

def update(self, new_token: torch.Tensor = None):

|

||||

if new_token is not None:

|

||||

self._update_newtokens(new_token)

|

||||

if self.state is not Status.DONE and new_token is not None:

|

||||

self._update_attnmask()

|

||||

|

||||

def _update_newtokens(self, new_token: torch.Tensor):

|

||||

if self.new_tokens is None:

|

||||

self.new_tokens = new_token

|

||||

else:

|

||||

self.new_tokens = torch.cat([self.new_tokens, new_token], dim=-1)

|

||||

|

||||

def _update_attnmask(self):

|

||||

self.attn_mask = torch.cat(

|

||||

(self.attn_mask, torch.ones((self.attn_mask.shape[0], 1), dtype=torch.int64, device=self.attn_mask.device)), dim=-1

|

||||

)

|

||||

|

||||

@property

|

||||

def cur_length(self):

|

||||

"""

|

||||

When there are no new tokens yet, the length is `mb_length`; otherwise the sequence length is `mb_length` plus the number of new tokens.

|

||||

|

||||

"""

|

||||

if self.new_tokens is None:

|

||||

return self.mb_length

|

||||

else:

|

||||

return self.mb_length + len(self.new_tokens[0])

|

||||

|

||||

|

||||

class BodyMicroBatchDescription(MicroBatchDescription):

|

||||

"""

|

||||

This class is used to record the information of every pipeline stage except the first. These stages should have the attributes `hidden_states` and `past_key_values`.

|

||||

|

||||

Args:

|

||||

inputs_dict (Dict[str, torch.Tensor]): will always be `None`. Other stages only receive hidden states from the previous stage.

|

||||

"""

|

||||

|

||||

def __init__(

|

||||

self,

|

||||

inputs_dict: Dict[str, torch.Tensor],

|

||||

max_input_len: int,

|

||||

max_output_len: int,

|

||||

cache_manager: MemoryManager,

|

||||

) -> None:

|

||||

super().__init__(inputs_dict, max_input_len, max_output_len, cache_manager)

|

||||

|

||||

@property

|

||||

def cur_length(self):

|

||||

"""

|

||||

Return the longest sequence length in the micro batch, read from `infer_state.seq_len`.

|

||||

|

||||

"""

|

||||

return self.infer_state.seq_len.max().item()

|

||||

|

||||

|

||||

class MicroBatchManager:

|

||||

"""

|

||||

MicroBatchManager is a class that manages the micro batch.

|

||||

|

||||

Args:

|

||||

stage (int): stage id of current stage.

|

||||

micro_batch_size (int): the micro batch size.

|

||||

micro_batch_buffer_size (int): the buffer size for micro batch. Normally, it should be the same as the number of pipeline stages.

|

||||

|

||||

"""

|

||||

|

||||

def __init__(

|

||||

self,

|

||||

stage: int,

|

||||

micro_batch_size: int,

|

||||

micro_batch_buffer_size: int,

|

||||

max_input_len: int,

|

||||

max_output_len: int,

|

||||

cache_manager_list: MemoryManager,

|

||||

):

|

||||

self.stage = stage

|

||||

self.micro_batch_size = micro_batch_size

|

||||

self.buffer_size = micro_batch_buffer_size

|

||||

self.max_input_len = max_input_len

|

||||

self.max_output_len = max_output_len

|

||||

self.cache_manager_list = cache_manager_list

|

||||

self.mb_descrption_buffer = {}

|

||||

self.new_tokens_buffer = {}

|

||||

self.idx = 0

|

||||

|

||||

def add_descrption(self, inputs_dict: Dict[str, torch.Tensor]):

|

||||

if self.stage == 0:

|

||||

self.mb_descrption_buffer[self.idx] = HeadMicroBatchDescription(

|

||||

inputs_dict, self.max_input_len, self.max_output_len, self.cache_manager_list[self.idx]

|

||||

)

|

||||

else:

|

||||

self.mb_descrption_buffer[self.idx] = BodyMicroBatchDescription(

|

||||

inputs_dict, self.max_input_len, self.max_output_len, self.cache_manager_list[self.idx]

|

||||

)

|

||||

|

||||

def step(self, new_token: torch.Tensor = None):

|

||||

"""

|

||||

Update the state of the micro batch manager. There are two cases:

|

||||

1. For the first stage in PREFILL, receive inputs and outputs; `add_descrption` will save its inputs.

|

||||

2. In all other cases, only receive the output of the previous stage and update the description.

|

||||

|

||||

Args:

|

||||

inputs_dict (Dict[str, torch.Tensor]): the inputs of current stage. The key should have `input_ids` and `attention_mask`.

|

||||

output_dict (Dict[str, torch.Tensor]): the outputs of previous stage. The key should have `hidden_states` and `past_key_values`.

|

||||

new_token (torch.Tensor): the new token generated by current stage.

|

||||

"""

|

||||

# Add description first if the description is None

|

||||

self.cur_descrption.update(new_token)

|

||||

return self.cur_state

|

||||

|

||||

def export_new_tokens(self):

|

||||

new_tokens_list = []

|

||||

for i in self.mb_descrption_buffer.values():

|

||||

new_tokens_list.extend(i.new_tokens.tolist())

|

||||

return new_tokens_list

|

||||

|

||||

def is_micro_batch_done(self):

|

||||

if len(self.mb_descrption_buffer) == 0:

|

||||

return False

|

||||

for mb in self.mb_descrption_buffer.values():

|

||||

if mb.state != Status.DONE:

|

||||

return False

|

||||

return True

|

||||

|

||||

def clear(self):

|

||||

self.mb_descrption_buffer.clear()

|

||||

for cache in self.cache_manager_list:

|

||||

cache.free_all()

|

||||

|

||||

def next(self):

|

||||

self.idx = (self.idx + 1) % self.buffer_size

|

||||

|

||||

def _remove_descrption(self):

|

||||

self.mb_descrption_buffer.pop(self.idx)

|

||||

|

||||

@property

|

||||

def cur_descrption(self) -> MicroBatchDescription:

|

||||

return self.mb_descrption_buffer.get(self.idx)

|

||||

|

||||

@property

|

||||

def cur_infer_state(self):

|

||||

if self.cur_descrption is None:

|

||||

return None

|

||||

return self.cur_descrption.infer_state

|

||||

|

||||

@property

|

||||

def cur_state(self):

|

||||

"""

|

||||

Return the state of the current micro batch; when the current description is None, the state is PREFILL

|

||||

|

||||

"""

|

||||

if self.cur_descrption is None:

|

||||

return Status.PREFILL

|

||||

return self.cur_descrption.state
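
The status transitions and the round-robin buffer index used by the classes above can be sketched without any tensors. This is a minimal illustration, not the module itself: `micro_batch_state`, `trace_states` and `next_slot` are hypothetical helper names, and the length comparisons simply mirror the `state` property and `next()` shown in this file.

```python
from enum import Enum
from typing import List


class Status(Enum):
    PREFILL = 1
    GENERATE = 2
    DONE = 3
    COOLDOWN = 4


def micro_batch_state(cur_length: int, target_length: int) -> Status:
    """Mirror of the `state` property: compare current and target lengths."""
    if cur_length == target_length:
        return Status.DONE
    if cur_length == target_length - 1:
        return Status.COOLDOWN
    return Status.GENERATE


def trace_states(mb_length: int, max_output_len: int) -> List[Status]:
    """Hypothetical helper: list the status after each generated token."""
    target_length = mb_length + max_output_len
    return [micro_batch_state(n, target_length) for n in range(mb_length, target_length + 1)]


def next_slot(idx: int, buffer_size: int) -> int:
    """Mirror of `MicroBatchManager.next`: advance the buffer index cyclically."""
    return (idx + 1) % buffer_size


if __name__ == "__main__":
    # A prompt of 4 tokens with 3 tokens to generate.
    print([s.name for s in trace_states(4, 3)])
```

With a buffer size equal to the number of pipeline stages, `next_slot` cycles through one description per in-flight micro batch.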

|

||||

|

|

@ -1,4 +1,5 @@

|

|||

from .bloom import BloomInferenceForwards

|

||||

from .chatglm2 import ChatGLM2InferenceForwards

|

||||

from .llama import LlamaInferenceForwards

|

||||

|

||||

__all__ = ["LlamaInferenceForwards", "BloomInferenceForwards"]

|

||||

__all__ = ["LlamaInferenceForwards", "BloomInferenceForwards", "ChatGLM2InferenceForwards"]

|

||||

|

|

|

|||

|

|

@ -14,7 +14,7 @@ from transformers.models.bloom.modeling_bloom import (

|

|||

)

|

||||

from transformers.utils import logging

|

||||

|

||||

from colossalai.inference.tensor_parallel.batch_infer_state import BatchInferState

|

||||

from colossalai.inference.kvcache_manager.batch_infer_state import BatchInferState

|

||||

from colossalai.kernel.triton import bloom_context_attn_fwd, copy_kv_cache_to_dest, token_attention_fwd

|

||||

from colossalai.pipeline.stage_manager import PipelineStageManager

|

||||

|

||||

|

|

|

|||

|

|

@ -3,7 +3,7 @@ from typing import List, Optional, Tuple

|

|||

import torch

|

||||

from transformers.utils import logging

|

||||

|

||||

from colossalai.inference.tensor_parallel.batch_infer_state import BatchInferState

|

||||

from colossalai.inference.kvcache_manager import BatchInferState

|

||||

from colossalai.kernel.triton.token_attention_kernel import Llama2TokenAttentionForwards

|

||||

from colossalai.pipeline.stage_manager import PipelineStageManager

|

||||

from colossalai.shardformer import ShardConfig

|

||||

|

|

|

|||

|

|

@ -6,7 +6,7 @@ import torch

|

|||

from transformers.models.llama.modeling_llama import LlamaAttention, LlamaDecoderLayer, LlamaForCausalLM, LlamaModel

|

||||

from transformers.utils import logging

|

||||

|

||||

from colossalai.inference.tensor_parallel.batch_infer_state import BatchInferState

|

||||

from colossalai.inference.kvcache_manager.batch_infer_state import BatchInferState

|

||||

from colossalai.kernel.triton import llama_context_attn_fwd, token_attention_fwd

|

||||

from colossalai.kernel.triton.token_attention_kernel import Llama2TokenAttentionForwards

|

||||

from colossalai.pipeline.stage_manager import PipelineStageManager

|

||||

|

|

|

|||

|

|

@ -1,5 +1,5 @@

|

|||

from .bloom import BloomModelInferPolicy

|

||||

from .chatglm import ChatGLM2InferPolicy

|

||||

from .chatglm2 import ChatGLM2InferPolicy

|

||||

from .llama import LlamaModelInferPolicy

|

||||

|

||||

__all__ = ["LlamaModelInferPolicy", "BloomModelInferPolicy", "ChatGLM2InferPolicy"]

|

||||

|

|

|

|||

|

|

@ -0,0 +1,2 @@

|

|||

from .batch_infer_state import BatchInferState

|

||||

from .kvcache_manager import MemoryManager

|

||||

|

|

@ -20,8 +20,7 @@ from transformers.modeling_utils import no_init_weights

|

|||

from transformers.utils.generic import ContextManagers

|

||||

from transformers.utils.hub import PushToHubMixin, cached_file

|

||||

|

||||

from colossalai.inference.tensor_parallel.batch_infer_state import BatchInferState

|

||||

from colossalai.inference.tensor_parallel.kvcache_manager import MemoryManager

|

||||

from colossalai.inference.kvcache_manager.batch_infer_state import BatchInferState, MemoryManager

|

||||

|

||||

try:

|

||||

import accelerate

|

||||

|

|

|

|||

|

|

@ -21,7 +21,7 @@ from transformers.models.llama.modeling_llama import (

|

|||

)

|

||||

from transformers.utils import add_start_docstrings_to_model_forward

|

||||

|

||||

from colossalai.inference.tensor_parallel.batch_infer_state import BatchInferState

|

||||

from colossalai.inference.kvcache_manager.batch_infer_state import BatchInferState

|

||||

from colossalai.kernel.triton import (

|

||||

copy_kv_cache_to_dest,

|

||||

int8_rotary_embedding_fwd,

|

||||

|

|

|

|||

|

|

@ -0,0 +1,143 @@

|

|||

# 🚀 Colossal-Inference

|

||||

|

||||

## Table of contents

|

||||

|

||||

## Introduction

|

||||

|

||||

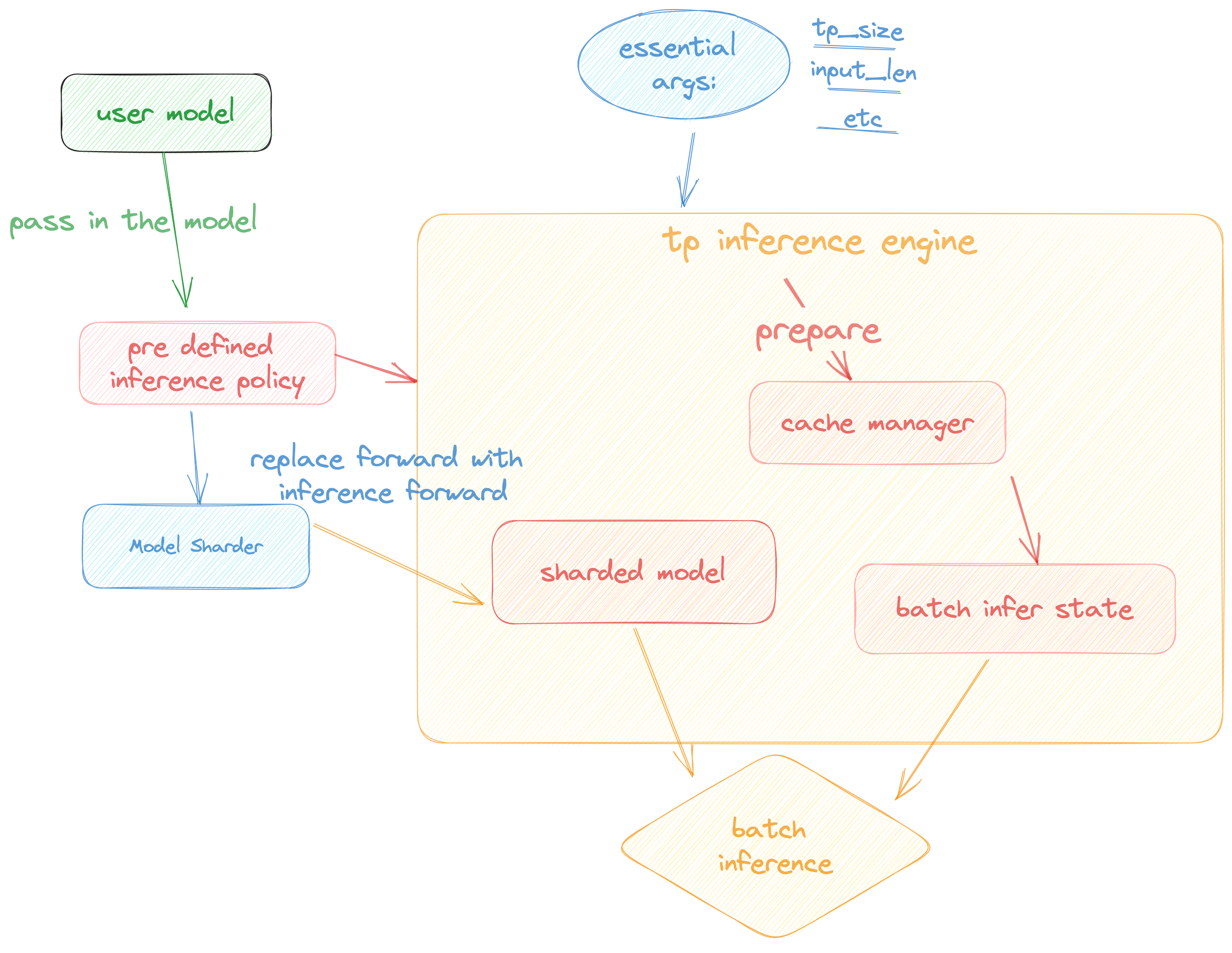

`Colossal Inference` is a module that contains colossal-ai designed inference framework, featuring high performance, steady and easy usability. `Colossal Inference` incorporated the advantages of the latest open-source inference systems, including LightLLM, TGI, vLLM, FasterTransformer and flash attention. while combining the design of Colossal AI, especially Shardformer, to reduce the learning curve for users.

|

||||

|

||||

## Design

|

||||

|

||||

Colossal Inference is composed of three main components:

|

||||

|

||||

1. High-performance kernels and ops, which are inspired by existing libraries and modified accordingly.

|

||||

2. Efficient memory management mechanism, which includes the key-value cache manager, allowing for zero memory waste during inference.

|

||||

    1. `cache manager`: serves as a memory manager for the key-value cache; it integrates functions such as memory allocation, indexing, and release.

|

||||

    2. `batch_infer_info`: holds all essential elements of a batch inference and is updated every batch.

|

||||

3. High-level inference engine combined with `Shardformer`: it allows our inference framework to easily invoke and utilize various parallel methods.

|

||||

    1. `engine.TPInferEngine`: a high-level interface that integrates with Shardformer, especially for multi-card (tensor parallel) inference.

|

||||

    2. `modeling.llama.LlamaInferenceForwards`: contains the `forward` methods for inference (in this case, for Llama).

|

||||

    3. `policies.llama.LlamaModelInferPolicy`: contains the policies for `llama` models, which are used to call `shardformer` and split the model forward in a tensor-parallel way.
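
To make the cache manager's role (allocation, indexing, and release) more concrete, here is a toy sketch of a token-level slot allocator. It is an assumption-laden illustration, not the actual `MemoryManager`: the class `ToyTokenSlotAllocator` and its `alloc`/`free` methods are hypothetical names, and only `free_all` echoes a method that the real manager is known to expose.

```python
from typing import List


class ToyTokenSlotAllocator:
    """Hypothetical token-level slot allocator; NOT ColossalAI's MemoryManager."""

    def __init__(self, max_total_token_num: int) -> None:
        # One flag per token slot in the (imaginary) key/value buffers.
        self.used = [False] * max_total_token_num

    @property
    def available(self) -> int:
        return self.used.count(False)

    def alloc(self, need: int) -> List[int]:
        """Reserve `need` slots and return their indices (lowest free first)."""
        free_slots = [i for i, taken in enumerate(self.used) if not taken]
        if need > len(free_slots):
            raise MemoryError(f"need {need} slots, only {len(free_slots)} free")
        picked = free_slots[:need]
        for i in picked:
            self.used[i] = True
        return picked

    def free(self, slots: List[int]) -> None:
        """Release previously allocated slots."""
        for i in slots:
            self.used[i] = False

    def free_all(self) -> None:
        """Release every slot, e.g. once all micro batches are done."""
        self.used = [False] * len(self.used)


if __name__ == "__main__":
    allocator = ToyTokenSlotAllocator(8)
    prompt_slots = allocator.alloc(3)
    print(prompt_slots, allocator.available)
```

Because slots are handed out per token rather than per fixed-size sequence, freed slots can be reused by any later request, which is the idea behind the "zero memory waste" claim above.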

|

||||

|

||||

## Pipeline of inference

|

||||

|

||||

In this section we discuss how Colossal Inference works and integrates with `Shardformer`. The details can be found in our code.

|

||||

|

||||

|

||||

|

||||

## Roadmap of our implementation

|

||||

|

||||

- [x] Design cache manager and batch infer state

|

||||

- [x] Design the TP inference engine to integrate with `Shardformer`

|

||||

- [x] Register corresponding high-performance `kernel` and `ops`

|

||||

- [x] Design policies and forwards (e.g. `Llama` and `Bloom`)

|

||||

- [x] policy

|

||||

- [x] context forward

|

||||

- [x] token forward

|

||||

- [x] support flash-decoding

|

||||

- [ ] Replace the kernels with `faster-transformer` in token-forward stage

|

||||

- [ ] Support all models

|

||||

- [x] Llama

|

||||

- [x] Llama-2

|

||||

- [x] Bloom

|

||||

- [x] Chatglm2

|

||||

- [ ] Benchmarking for all models

|

||||

|

||||

## Get started

|

||||

|

||||

### Installation

|

||||

|

||||

```bash

|

||||

pip install -e .

|

||||

```

|

||||

|

||||

### Requirements

|

||||

|

||||

dependencies

|

||||

|

||||

```bash

|

||||

pytorch= 1.13.1 (gpu)

|

||||

cuda>= 11.6

|

||||

transformers= 4.30.2

|

||||

triton

|

||||

# to install flash-attention

|

||||

flash-attention

|

||||

|

||||

# install lightllm since we depend on lightllm triton kernels

|

||||

git clone https://github.com/ModelTC/lightllm

|

||||

cd lightllm

|

||||

git checkout 28c1267cfca536b7b4f28e921e03de735b003039

|

||||

pip3 install -e .

|

||||

|

||||

# also, install xformers from source:

|

||||

pip install ninja

|

||||

# Set TORCH_CUDA_ARCH_LIST if running and building on different GPU types

|

||||

pip install -v -U git+https://github.com/facebookresearch/xformers.git@main#egg=xformers

|

||||

|

||||

```

|

||||

|

||||

### Docker

|

||||

|

||||

You can use `docker run` to set up the environment in a Docker container:

|

||||

|

||||

```

|

||||

# env: python==3.8, cuda 11.6, pytorch == 1.13.1 triton==2.0.0.dev20221202, vllm kernels support, flash-attention-2 kernels support

|

||||

docker pull hpcaitech/colossalai-inference:v2

|

||||

docker run -it --gpus all --name ANY_NAME -v $PWD:/workspace -w /workspace hpcaitech/colossalai-inference:v2 /bin/bash

|

||||

|

||||

# enter into docker container

|

||||

cd /path/to/ColossalAI

|

||||

pip install -e .

|

||||

|

||||

# install lightllm

|

||||

git clone https://github.com/ModelTC/lightllm

|

||||

cd lightllm

|

||||

git checkout 28c1267cfca536b7b4f28e921e03de735b003039

|

||||

pip3 install -e .

|

||||

|

||||

# install xformers from source

|

||||

pip install ninja

|

||||

# Set TORCH_CUDA_ARCH_LIST if running and building on different GPU types

|

||||

pip install -v -U git+https://github.com/facebookresearch/xformers.git@main#egg=xformers

|

||||

```

|

||||

|

||||

### Dive into fast-inference!

|

||||

|

||||

Example files are in:

|

||||

|

||||

```bash

|

||||

cd colossalai.examples

|

||||

python xx

|

||||

```

|

||||

|

||||

## Performance

|

||||

|

||||

### Environment

|

||||

|

||||

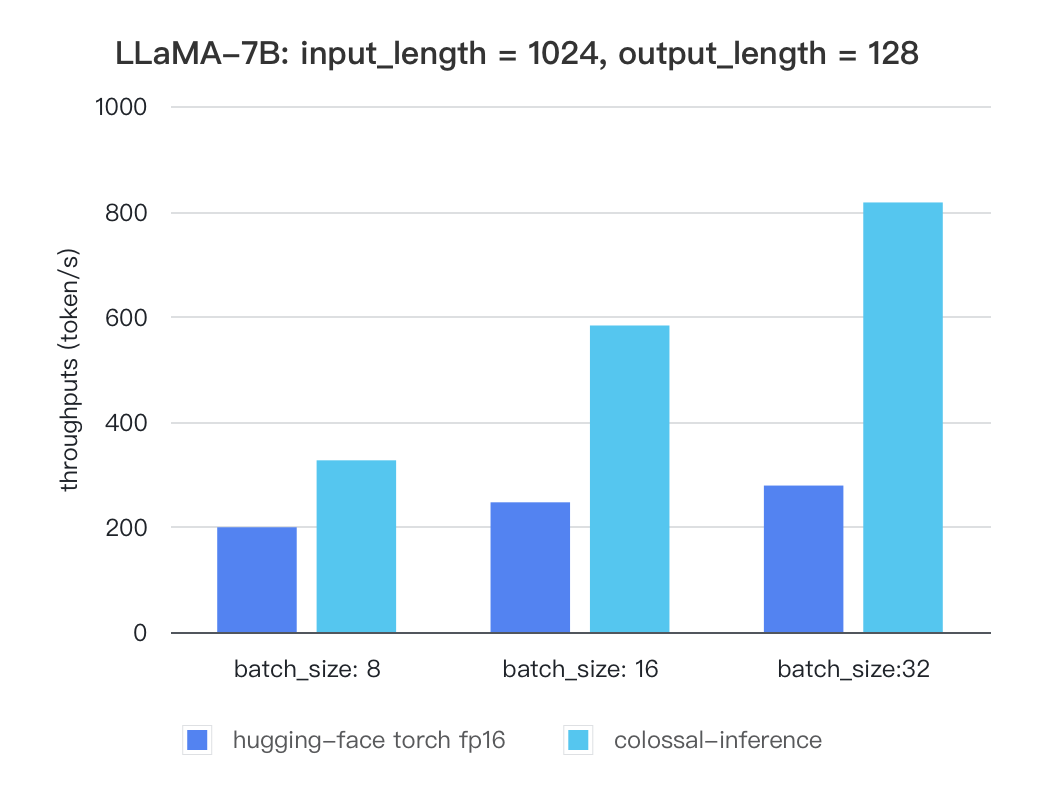

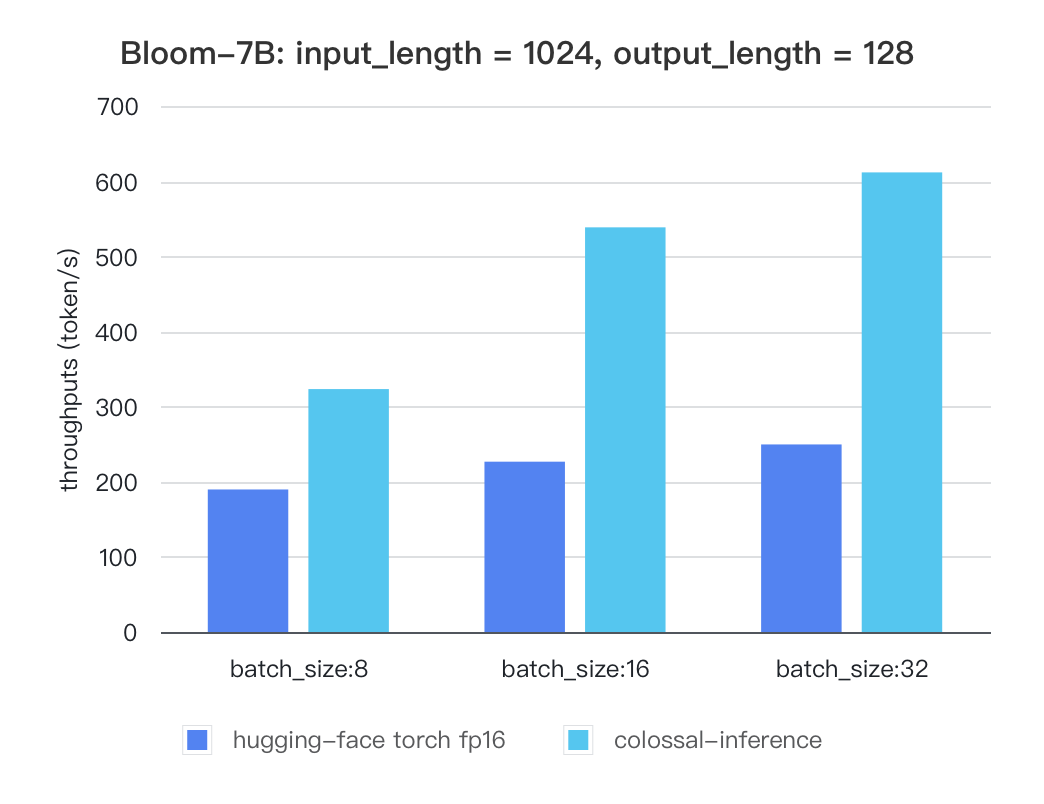

We conducted multiple benchmark tests to evaluate the performance. We compared the inference `latency` and `throughputs` between `colossal-inference` and original `hugging-face torch fp16`.

|

||||

|

||||

For each model, experiments were conducted with multiple batch sizes under the same configuration: `7 billion (7b)` parameters, an input length of `1024`, and an output length of `128`. The results are as follows (due to time constraints, the evaluation has so far covered only single-GPU performance on an `A100`; multi-GPU performance will be addressed in the future):

|

||||

|

||||

### Single GPU Performance

|

||||

|

||||

The stats below are currently measured on a single A100 GPU. Token latency is calculated from the average of the context-forward and decoding-forward processes, that is, both processes are combined when calculating token generation times. We are actively developing new features and methods to further optimize LLM performance. Please stay tuned.

|

||||

|

||||

#### Llama

|

||||

|

||||

| batch_size | 8 | 16 | 32 |

|

||||

| :---------------------: | :----: | :----: | :----: |

|

||||

| hugging-face torch fp16 | 199.12 | 246.56 | 278.4 |

|

||||

| colossal-inference | 326.4 | 582.72 | 816.64 |

|

||||

|

||||

|

||||

|

||||

#### Bloom

|

||||

|

||||

| batch_size | 8 | 16 | 32 |

|

||||

| :---------------------: | :----: | :----: | :----: |

|

||||

| hugging-face torch fp16 | 189.68 | 226.66 | 249.61 |

|

||||

| colossal-inference | 323.28 | 538.52 | 611.64 |
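
The relative gain per batch size can be read off the two tables with a short calculation. The sketch below assumes the figures are a higher-is-better metric (the tables do not state a unit), and the names `RESULTS` and `speedups` are introduced here only for illustration.

```python
from typing import Dict, List

BATCH_SIZES = [8, 16, 32]

# Figures copied from the Llama and Bloom tables above.
RESULTS: Dict[str, Dict[str, List[float]]] = {
    "Llama": {
        "hugging-face torch fp16": [199.12, 246.56, 278.4],
        "colossal-inference": [326.4, 582.72, 816.64],
    },
    "Bloom": {
        "hugging-face torch fp16": [189.68, 226.66, 249.61],
        "colossal-inference": [323.28, 538.52, 611.64],
    },
}


def speedups(model: str) -> List[float]:
    """Ratio of colossal-inference to the Hugging Face baseline per batch size."""
    baseline = RESULTS[model]["hugging-face torch fp16"]
    ours = RESULTS[model]["colossal-inference"]
    return [o / b for o, b in zip(ours, baseline)]


if __name__ == "__main__":
    for model in RESULTS:
        ratios = ", ".join(
            f"bs={bs}: {r:.2f}x" for bs, r in zip(BATCH_SIZES, speedups(model))
        )
        print(f"{model}: {ratios}")
```

Under that reading, the ratio grows with batch size for both models, from roughly 1.6x to 1.7x at batch size 8 up to roughly 2.5x to 2.9x at batch size 32.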

|

||||

|

||||

|

||||

|

||||

The results of more models are coming soon!

|

||||

|

|

@ -0,0 +1,4 @@

|

|||

from .hybridengine import CaiInferEngine

|

||||

from .hybridengine.polices import LlamaModelInferPolicy

|

||||

|

||||

__all__ = ["CaiInferEngine", "LlamaModelInferPolicy"]

|

||||

|

|

@ -0,0 +1,3 @@

|

|||

from .engine import CaiInferEngine

|

||||

|

||||

__all__ = ["CaiInferEngine"]

|

||||

|

|

@ -0,0 +1,170 @@

|

|||

import torch

|

||||

import torch.distributed as dist

|

||||

import torch.nn as nn

|

||||

from transformers.tokenization_utils_base import BatchEncoding

|

||||

|

||||

from colossalai.cluster import ProcessGroupMesh

|

||||

from colossalai.pipeline.schedule.generate import GenerateSchedule

|

||||

from colossalai.pipeline.stage_manager import PipelineStageManager

|

||||

from colossalai.shardformer import ShardConfig, ShardFormer

|

||||

from colossalai.shardformer.policies.base_policy import Policy

|

||||

|

||||

from ..pipeline.microbatch_manager import MicroBatchManager

|

||||

from ..tensor_parallel.kvcache_manager import MemoryManager

|

||||

|

||||

PP_AXIS, TP_AXIS = 0, 1

|

||||

|

||||

_supported_models = [

|

||||

"LlamaForCausalLM",

|

||||

]

|

||||

|

||||

|

||||

class CaiInferEngine:

|

||||

"""

|

||||

CaiInferEngine is a class that handles the pipeline parallel inference.

|

||||

|

||||

Args:

|

||||

tp_size (int): the size of tensor parallelism.

|

||||

pp_size (int): the size of pipeline parallelism.

|

||||

model (`nn.Module`): the model not in pipeline style, and will be modified with `ShardFormer`.

|

||||

model_policy (`colossalai.shardformer.policies.base_policy.Policy`): the policy to shardformer model.

|

||||

micro_batch_size (int): the micro batch size.

|

||||

micro_batch_buffer_size (int): the buffer size for micro batch. Normally, it should be the same as the number of pipeline stages.

|

||||

max_batch_size (int): the maximum batch size.

|

||||

max_input_len (int): the maximum input length.

|

||||

max_output_len (int): the maximum output length.

|

||||

|

||||

Example:

|

||||

|

||||

```python

|

||||

from colossalai.inference import CaiInferEngine

|

||||

from colossalai.inference.pipeline.policies import LlamaModelInferPolicy

|

||||

import colossalai

|

||||

from transformers import LlamaForCausalLM, LlamaTokenizer

|

||||

|

||||

colossalai.launch_from_torch(config={})

|

||||

|

||||

model = LlamaForCausalLM.from_pretrained("your_path_to_model")

|

||||

tokenizer = LlamaTokenizer.from_pretrained("your_path_to_model")

|

||||

# assume the model is inferred with 2 pipeline stages

|

||||

inferengine = CaiInferEngine(pp_size=2, model=model, model_policy=LlamaModelInferPolicy())

|

||||

|

||||

input = ["Introduce a landmark in China ","Introduce a landmark in China "]

|

||||

data = tokenizer(input, return_tensors='pt')

|

||||

output = inferengine.inference(data.to('cuda'))

|

||||

|

||||

```

|

||||

|

||||

"""

|

||||

|

||||

def __init__(

|

||||

self,

|

||||

tp_size: int = 1,

|

||||

pp_size: int = 1,

|

||||

dtype: str = "fp16",

|

||||

model: nn.Module = None,

|

||||

model_policy: Policy = None,

|

||||

micro_batch_size: int = 1,

|

||||

micro_batch_buffer_size: int = None,

|

||||

max_batch_size: int = 4,

|

||||

max_input_len: int = 32,

|

||||

max_output_len: int = 32,

|

||||

verbose: bool = False,

|

||||

# TODO: implement early_stopping, and various generation options

|

||||

early_stopping: bool = False,

|

||||

do_sample: bool = False,

|

||||

num_beams: int = 1,

|

||||

) -> None:

|

||||

assert model.__class__.__name__ in _supported_models, f"Model {model.__class__.__name__} is not supported."

|

||||

assert (

|

||||

tp_size * pp_size == dist.get_world_size()

|

||||

), f"TP size({tp_size}) * PP size({pp_size}) should be equal to the global world size ({dist.get_world_size()})"

|

||||

assert model and model_policy, "Model with model_policy should be provided."

|

||||

assert dtype in ["fp16", "fp32", "bf16"], "dtype should be one of 'fp16', 'fp32', 'bf16'"

|

||||

|

||||

assert max_batch_size <= 64, "Max batch size exceeds the constraint"

|

||||

assert max_input_len + max_output_len <= 4096, "Max length exceeds the constraint"

|

||||

|

||||

# TODO: support only tensor parallel inference

|

||||

assert pp_size > 1, "Not support only tensor parallel inference."

|

||||

self.pp_size = pp_size

|

||||

self.tp_size = tp_size

|

||||

|

||||

if dtype == "fp16":

|

||||

self.dtype = torch.float16

|

||||

model.half()

|

||||

elif dtype == "bf16":

|

||||

self.dtype = torch.bfloat16

|

||||

model.to(torch.bfloat16)

|

||||

else:

|

||||

self.dtype = torch.float32

|

||||

|

||||

# Init pg mesh

|

||||

pg_mesh = ProcessGroupMesh(pp_size, tp_size)

|

||||

|

||||

stage_manager = None

|

||||

if pp_size > 1:

|

||||

stage_manager = PipelineStageManager(pg_mesh, PP_AXIS, True)

|

||||

self.cache_manager_list = [

|

||||

self._init_manager(model, max_batch_size, max_input_len, max_output_len)

|

||||

for _ in range(micro_batch_buffer_size or pp_size)

|

||||

]

|

||||

self.mb_manager = MicroBatchManager(

|

||||

stage_manager.stage,

|

||||

micro_batch_size,

|

||||

micro_batch_buffer_size or pp_size,

|

||||

max_input_len,

|

||||

max_output_len,

|

||||

self.cache_manager_list,

|

||||

)

|

||||

self.verbose = verbose

|

||||

self.schedule = GenerateSchedule(stage_manager, self.mb_manager, verbose)

|

||||

|

||||

self.model = self._shardformer(model, model_policy, stage_manager, pg_mesh.get_group_along_axis(TP_AXIS))

|

||||

|

||||

def inference(self, input_list):

|

||||

"""

|

||||

Args:

|

||||

input_list (`BatchEncoding` or `dict`): the input data; a `BatchEncoding` is unwrapped to its underlying `dict`.

|

||||

|

||||

Returns:

|

||||

out (list): a list of output data, each element is a list of tokens.

|

||||

timestamp (float): the time cost of the inference, only returned when verbose is `True`.

|

||||

"""

|

||||

assert isinstance(

|

||||

input_list, (BatchEncoding, dict)

|

||||

), f"Only accept BatchEncoding or dict as input, but get {input_list.__class__.__name__}."

|

||||

if isinstance(input_list, BatchEncoding):

|

||||

input_list = input_list.data

|

||||

out, timestamp = self.schedule.generate_step(self.model, iter([input_list]))

|

||||

if self.verbose:

|

||||

return out, timestamp

|

||||

else:

|

||||

return out

|

||||

|

||||

def _shardformer(self, model, model_policy, stage_manager, tp_group):

|

||||

shardconfig = ShardConfig(

|

||||

tensor_parallel_process_group=tp_group,

|

||||

pipeline_stage_manager=stage_manager,

|

||||

enable_tensor_parallelism=False,

|

||||

enable_fused_normalization=False,

|

||||

enable_all_optimization=False,

|

||||

enable_flash_attention=False,

|

||||

enable_jit_fused=False,

|

||||

enable_sequence_parallelism=False,

|

||||

)

|

||||

shardformer = ShardFormer(shard_config=shardconfig)

|

||||

shard_model, _ = shardformer.optimize(model, model_policy)

|

||||

return shard_model.cuda()

|

||||

|

||||

def _init_manager(self, model, max_batch_size: int, max_input_len: int, max_output_len: int) -> MemoryManager:

|

||||

max_total_token_num = max_batch_size * (max_input_len + max_output_len)

|

||||

head_dim = model.config.hidden_size // model.config.num_attention_heads

|

||||

head_num = model.config.num_attention_heads

|

||||

num_hidden_layers = (

|

||||

model.config.num_hidden_layers if hasattr(model.config, "num_hidden_layers") else model.config.num_layers

|

||||

)

|

||||

layer_num = num_hidden_layers // self.pp_size

|

||||

|

||||

cache_manager = MemoryManager(max_total_token_num, self.dtype, head_num, head_dim, layer_num)

|

||||

return cache_manager
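
The sizing arithmetic in `_init_manager` can be checked in isolation. The sketch below reproduces the three derived quantities and adds a rough byte estimate; the estimate assumes one key buffer and one value buffer per layer, each shaped `(tokens, heads, head_dim)`, which is an assumption about `MemoryManager` internals. `KVCachePlan` and `plan_kv_cache` are hypothetical names.

```python
from dataclasses import dataclass

# Bytes per element for the dtype strings accepted by the engine.
DTYPE_BYTES = {"fp16": 2, "bf16": 2, "fp32": 4}


@dataclass
class KVCachePlan:
    max_total_token_num: int
    head_num: int
    head_dim: int
    layer_num: int
    total_bytes: int


def plan_kv_cache(
    hidden_size: int,
    num_attention_heads: int,
    num_hidden_layers: int,
    pp_size: int,
    max_batch_size: int,
    max_input_len: int,
    max_output_len: int,
    dtype: str = "fp16",
) -> KVCachePlan:
    """Same arithmetic as `_init_manager`, plus an assumed memory estimate."""
    max_total_token_num = max_batch_size * (max_input_len + max_output_len)
    head_dim = hidden_size // num_attention_heads
    layer_num = num_hidden_layers // pp_size  # layers held by one pipeline stage
    # Assumption: separate key and value buffers per layer (factor of 2).
    total_bytes = (
        2 * layer_num * max_total_token_num * num_attention_heads * head_dim * DTYPE_BYTES[dtype]
    )
    return KVCachePlan(max_total_token_num, num_attention_heads, head_dim, layer_num, total_bytes)


if __name__ == "__main__":
    # Llama-7B-like shape with the engine's default limits and two pipeline stages.
    plan = plan_kv_cache(4096, 32, 32, pp_size=2, max_batch_size=4, max_input_len=32, max_output_len=32)
    print(plan, f"{plan.total_bytes / 2**20:.0f} MiB per cache manager")
```

Since the engine builds one cache manager per micro batch buffer slot, the per-stage footprint would be this estimate multiplied by `micro_batch_buffer_size or pp_size`.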

|

||||

|

|

@ -0,0 +1,3 @@

|

|||

from .llama import LlamaInferenceForwards

|

||||

|

||||

__all__ = ["LlamaInferenceForwards"]

|

||||

|

|

@ -0,0 +1,489 @@

|

|||

# This code is adapted from huggingface transformers: https://github.com/huggingface/transformers/blob/v4.34.1/src/transformers/models/llama/modeling_llama.py

|

||||

import math

|

||||

from typing import List, Optional, Tuple

|

||||

|

||||

import torch

|

||||

from transformers.models.llama.modeling_llama import LlamaAttention, LlamaDecoderLayer, LlamaForCausalLM, LlamaModel

|

||||

from transformers.utils import logging

|

||||

|

||||

from colossalai.inference.tensor_parallel.batch_infer_state import BatchInferState

|

||||

from colossalai.kernel.triton import llama_context_attn_fwd, token_attention_fwd

|

||||

from colossalai.kernel.triton.token_attention_kernel import Llama2TokenAttentionForwards

|

||||

from colossalai.pipeline.stage_manager import PipelineStageManager

|

||||

|

||||

from ._utils import copy_kv_to_mem_cache

|

||||

|

||||

try:

|

||||

from lightllm.models.llama2.triton_kernel.context_flashattention_nopad import (

|

||||

context_attention_fwd as lightllm_llama2_context_attention_fwd,

|

||||

)

|

||||

from lightllm.models.llama.triton_kernel.context_flashattention_nopad import (

|

||||

context_attention_fwd as lightllm_context_attention_fwd,

|

||||

)

|

||||

from lightllm.models.llama.triton_kernel.rotary_emb import rotary_emb_fwd as llama_rotary_embedding_fwd

|

||||

|

||||

HAS_LIGHTLLM_KERNEL = True

|

||||

except ImportError:

|

||||

print("please install lightllm from source to run inference: https://github.com/ModelTC/lightllm")

|

||||

HAS_LIGHTLLM_KERNEL = False

|

||||

|

||||

try:

|

||||

from flash_attn import flash_attn_with_kvcache

|

||||

|

||||

HAS_FLASH_KERNEL = True

|

||||

except ImportError:

|

||||

HAS_FLASH_KERNEL = False

|

||||

print("please install flash attention from https://github.com/Dao-AILab/flash-attention")

|

||||

|

||||

|

||||

def rotate_half(x):

|

||||

"""Rotates half the hidden dims of the input."""

|

||||

x1 = x[..., : x.shape[-1] // 2]

|

||||

x2 = x[..., x.shape[-1] // 2 :]

|

||||

return torch.cat((-x2, x1), dim=-1)

|

||||

|

||||

|

||||

def apply_rotary_pos_emb(q, k, cos, sin, position_ids):

|

||||

# The first two dimensions of cos and sin are always 1, so we can `squeeze` them.

|

||||

cos = cos.squeeze(1).squeeze(0) # [seq_len, dim]

|

||||

sin = sin.squeeze(1).squeeze(0) # [seq_len, dim]

|

||||

cos = cos[position_ids].unsqueeze(1) # [bs, 1, seq_len, dim]

|

||||

sin = sin[position_ids].unsqueeze(1) # [bs, 1, seq_len, dim]

|

||||

|

||||

q_embed = (q * cos) + (rotate_half(q) * sin)

|

||||

k_embed = (k * cos) + (rotate_half(k) * sin)

|

||||

return q_embed, k_embed
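To make the arithmetic in `rotate_half` and `apply_rotary_pos_emb` concrete, here is a plain-Python sketch for a single head vector at a single position. `rotary_tables` and `apply_rotary` are hypothetical helpers, and the base of 10000 is an assumption taken from the common Llama default rather than from this file.

```python
import math
from typing import List, Tuple


def rotate_half(x: List[float]) -> List[float]:
    """Split x into halves (x1, x2) and return (-x2, x1), as in the tensor version."""
    half = len(x) // 2
    x1, x2 = x[:half], x[half:]
    return [-v for v in x2] + x1


def rotary_tables(position: int, dim: int, base: float = 10000.0) -> Tuple[List[float], List[float]]:
    """Build cos/sin tables for one position; each frequency is repeated for both halves."""
    half = dim // 2
    angles = [position / (base ** (2 * i / dim)) for i in range(half)]
    cos = [math.cos(a) for a in angles] * 2
    sin = [math.sin(a) for a in angles] * 2
    return cos, sin


def apply_rotary(x: List[float], cos: List[float], sin: List[float]) -> List[float]:
    """Compute x * cos + rotate_half(x) * sin element-wise."""
    rotated = rotate_half(x)
    return [xi * c + ri * s for xi, ri, c, s in zip(x, rotated, cos, sin)]
```

Because each pair `(x[i], x[i + dim // 2])` is rotated by the same angle, the vector norm is unchanged, and position 0 leaves the vector as it was.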

|

||||

|

||||

|

||||

def llama_triton_context_attention(

|

||||

query_states, key_states, value_states, attn_output, infer_state, num_key_value_groups=1

|

||||

):

|

||||

if num_key_value_groups == 1:

|

||||

if HAS_LIGHTLLM_KERNEL is False:

|

||||

llama_context_attn_fwd(

|

||||

query_states,

|

||||

key_states,

|

||||

value_states,

|

||||

attn_output,

|

||||

infer_state.start_loc,

|

||||

infer_state.seq_len,

|

||||

# infer_state.cache_manager.past_key_values_length,

|

||||

infer_state.max_len_in_batch,

|

||||

)

|

||||

else:

|

||||

lightllm_context_attention_fwd(

|

||||

query_states,

|

||||

key_states,

|

||||

value_states,

|

||||

attn_output,

|

||||

infer_state.start_loc,

|

||||

infer_state.seq_len,

|

||||

# infer_state.cache_manager.past_key_values_length,

|

||||

infer_state.max_len_in_batch,

|

||||

)

|

||||

else:

|

||||

assert HAS_LIGHTLLM_KERNEL is True, "You have to install lightllm kernels to run llama2 model"

|

||||

lightllm_llama2_context_attention_fwd(

|

||||

query_states,

|

||||

key_states,

|

||||

value_states,

|

||||

attn_output,

|

||||

infer_state.start_loc,

|

||||

infer_state.seq_len,

|

||||

# infer_state.cache_manager.past_key_values_length,

|

||||

infer_state.max_len_in_batch,

|

||||

)

|

||||

|

||||

|

||||

def llama_triton_token_attention(query_states, attn_output, infer_state, num_key_value_groups=1):

|

||||

assert HAS_LIGHTLLM_KERNEL is True, "You have to install lightllm kernel to run token attention for llama models"

|

||||

if num_key_value_groups == 1:

|

||||

token_attention_fwd(

|

||||

query_states,

|

||||

infer_state.cache_manager.key_buffer[infer_state.decode_layer_id],

|

||||

infer_state.cache_manager.value_buffer[infer_state.decode_layer_id],

|

||||

attn_output,

|

||||

infer_state.block_loc,

|

||||

infer_state.start_loc,

|

||||

infer_state.seq_len,

|

||||

# infer_state.cache_manager.past_key_values_length,

|

||||

infer_state.max_len_in_batch,

|

||||

)

|

||||

else:

|

||||

Llama2TokenAttentionForwards.token_attn(

|

||||

query_states,

|

||||

infer_state.cache_manager.key_buffer[infer_state.decode_layer_id],

|

||||

infer_state.cache_manager.value_buffer[infer_state.decode_layer_id],

|

||||

attn_output,

|

||||

infer_state.block_loc,

|

||||

infer_state.start_loc,

|

||||

infer_state.seq_len,

|

||||

# infer_state.cache_manager.past_key_values_length,

|

||||

infer_state.max_len_in_batch,

|

||||

infer_state.other_kv_index,

|

||||

)
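Both attention helpers above dispatch on `num_key_value_groups`: a value of 1 means every query head has its own key/value head, while a larger value means grouped-query attention. The sketch below shows the usual head mapping under that scheme. It assumes the conventional consecutive grouping; the Triton kernels themselves are not shown in this section, so their internal indexing is not confirmed here.

```python
def kv_head_for_query_head(q_head: int, num_heads: int, num_key_value_heads: int) -> int:
    """Map a query head index to the key/value head it shares (consecutive grouping assumed)."""
    if num_heads % num_key_value_heads != 0:
        raise ValueError("num_heads must be divisible by num_key_value_heads")
    num_key_value_groups = num_heads // num_key_value_heads
    return q_head // num_key_value_groups


def pick_token_attention_kernel(num_key_value_groups: int) -> str:
    """Return the name of the kernel the decode-time dispatch above would select."""
    if num_key_value_groups == 1:
        return "token_attention_fwd"
    return "Llama2TokenAttentionForwards.token_attn"
```

With 8 query heads and 2 key/value heads, for example, query heads 0 to 3 read key/value head 0 and query heads 4 to 7 read key/value head 1.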

|

||||

|

||||

|

||||

class LlamaInferenceForwards:

|

||||

"""

|

||||

This class holds forwards for llama inference.

|

||||

We intend to replace the forward methods for LlamaModel, LlamaDecoderLayer, and LlamaAttention for LlamaForCausalLM.

|

||||

"""

|

||||

|

||||

@staticmethod

|

||||

def llama_causal_lm_forward(

|

||||

self: LlamaForCausalLM,

|

||||

input_ids: torch.LongTensor = None,

|

||||

attention_mask: Optional[torch.Tensor] = None,

|

||||

position_ids: Optional[torch.LongTensor] = None,

|

||||

past_key_values: Optional[List[torch.FloatTensor]] = None,

|

||||

inputs_embeds: Optional[torch.FloatTensor] = None,

|

||||

labels: Optional[torch.LongTensor] = None,

|

||||

use_cache: Optional[bool] = None,

|

||||

output_attentions: Optional[bool] = None,

|

||||

output_hidden_states: Optional[bool] = None,

|

||||

return_dict: Optional[bool] = None,

|

||||

infer_state: BatchInferState = None,

|

||||

stage_manager: Optional[PipelineStageManager] = None,

|

||||

hidden_states: Optional[torch.FloatTensor] = None,

|

||||

stage_index: Optional[List[int]] = None,

|

||||

):

|

||||

r"""

|

||||

Args:

|

||||

labels (`torch.LongTensor` of shape `(batch_size, sequence_length)`, *optional*):

|

||||

Labels for computing the masked language modeling loss. Indices should either be in `[0, ...,

|

||||

config.vocab_size]` or -100 (see `input_ids` docstring). Tokens with indices set to `-100` are ignored

|

||||

(masked), the loss is only computed for the tokens with labels in `[0, ..., config.vocab_size]`.

|

||||

|

||||

"""

|

||||

logger = logging.get_logger(__name__)

|

||||

|

||||

return_dict = return_dict if return_dict is not None else self.config.use_return_dict

|

||||

|

||||

if output_attentions:

|

||||

logger.warning_once("output_attentions=True is not supported for pipeline models at the moment.")

|

||||

output_attentions = False

|

||||

if output_hidden_states:

|

||||

logger.warning_once("output_hidden_states=True is not supported for pipeline models at the moment.")

|

||||

output_hidden_states = False

|

||||

|

||||

# If this is the first stage and warmup is done, go through lm_head first

|

||||

if stage_manager.is_first_stage() and hidden_states is not None:

|

||||

lm_logits = self.lm_head(hidden_states)

|

||||

return {"logits": lm_logits}

|

||||

|

||||

# decoder outputs consist of (dec_features, layer_state, dec_hidden, dec_attn)

|

||||

outputs = LlamaInferenceForwards.llama_model_forward(

|

||||

self.model,

|

||||

input_ids=input_ids,

|

||||

attention_mask=attention_mask,

|

||||

position_ids=position_ids,

|

||||

past_key_values=past_key_values,

|

||||

inputs_embeds=inputs_embeds,

|

||||

use_cache=use_cache,

|

||||

output_attentions=output_attentions,

|

||||

output_hidden_states=output_hidden_states,

|

||||

return_dict=return_dict,

|

||||

infer_state=infer_state,

|

||||

stage_manager=stage_manager,

|

||||

hidden_states=hidden_states,

|

||||

stage_index=stage_index,

|

||||

)

|

||||

|

||||

return outputs

|

||||

|

||||

@staticmethod

|

||||

def llama_model_forward(

|

||||

self: LlamaModel,

|

||||

input_ids: torch.LongTensor = None,

|

||||

attention_mask: Optional[torch.Tensor] = None,

|

||||

position_ids: Optional[torch.LongTensor] = None,

|

||||

past_key_values: Optional[List[torch.FloatTensor]] = None,

|

||||

inputs_embeds: Optional[torch.FloatTensor] = None,

|

||||

use_cache: Optional[bool] = None,

|

||||

output_attentions: Optional[bool] = None,

|

||||

output_hidden_states: Optional[bool] = None,

|

||||

return_dict: Optional[bool] = None,

|

||||

infer_state: BatchInferState = None,

|

||||

stage_manager: Optional[PipelineStageManager] = None,

|

||||

hidden_states: Optional[torch.FloatTensor] = None,

|

||||

stage_index: Optional[List[int]] = None,

|

||||

):

|

||||

return_dict = return_dict if return_dict is not None else self.config.use_return_dict

|

||||

|

||||

use_cache = use_cache if use_cache is not None else self.config.use_cache

|

||||

# retrieve input_ids and inputs_embeds

|

||||

if stage_manager is None or stage_manager.is_first_stage():

|

||||

if input_ids is not None and inputs_embeds is not None:

|

||||

raise ValueError("You cannot specify both decoder_input_ids and decoder_inputs_embeds at the same time")

|

||||

elif input_ids is not None:

|

||||

batch_size, seq_length = input_ids.shape

|

||||

elif inputs_embeds is not None:

|

||||

batch_size, seq_length, _ = inputs_embeds.shape

|

||||

else:

|

||||

raise ValueError("You have to specify either decoder_input_ids or decoder_inputs_embeds")

|

||||

device = input_ids.device if input_ids is not None else inputs_embeds.device

|

||||

if inputs_embeds is None:

|

||||

inputs_embeds = self.embed_tokens(input_ids)

|

||||

hidden_states = inputs_embeds

|

||||

else:

|

||||

assert stage_manager is not None

|

||||

assert hidden_states is not None, f"hidden_state should not be none in stage {stage_manager.stage}"

|

||||

input_shape = hidden_states.shape[:-1]

|

||||

batch_size, seq_length = input_shape

|

||||

device = hidden_states.device

|

||||

|

||||

if infer_state.is_context_stage:

|

||||

past_key_values_length = 0

|

||||

else:

|

||||

past_key_values_length = infer_state.max_len_in_batch - 1

|

||||

|

||||

# NOTE: distinguish the prefill stage from the decode stage

|

||||

# block_loc requires a different value-assignment method in each of the two stages

|

||||

if use_cache and seq_length != 1:

|

||||

# NOTE assume prefill stage

|

||||

# allocate memory block

|

||||

infer_state.is_context_stage = True # set prefill stage, notify attention layer

|

||||

infer_state.context_mem_index = infer_state.cache_manager.alloc(infer_state.total_token_num)

|

||||

infer_state.init_block_loc(

|

||||

infer_state.block_loc, infer_state.seq_len, seq_length, infer_state.context_mem_index

|

||||

)

|

||||

else:

|

||||

infer_state.is_context_stage = False

|

||||

alloc_mem = infer_state.cache_manager.alloc_contiguous(batch_size)

|

||||

if alloc_mem is not None:

|

||||

infer_state.decode_is_contiguous = True

|

||||

infer_state.decode_mem_index = alloc_mem[0]

|

||||

infer_state.decode_mem_start = alloc_mem[1]

|

||||

infer_state.decode_mem_end = alloc_mem[2]

|

||||

infer_state.block_loc[:, infer_state.max_len_in_batch - 1] = infer_state.decode_mem_index

|

||||

else:

|

||||

infer_state.decode_is_contiguous = False

|

||||

alloc_mem = infer_state.cache_manager.alloc(batch_size)

|

||||

infer_state.decode_mem_index = alloc_mem

|

||||

infer_state.block_loc[:, infer_state.max_len_in_batch - 1] = infer_state.decode_mem_index

|

||||

|

||||

if position_ids is None:

|

||||

position_ids = torch.arange(

|

||||

past_key_values_length, seq_length + past_key_values_length, dtype=torch.long, device=device

|

||||

)

|

||||

position_ids = position_ids.repeat(batch_size, 1)

|

||||

position_ids = position_ids.unsqueeze(0).view(-1, seq_length)

|

||||

else:

|

||||

position_ids = position_ids.view(-1, seq_length).long()

|

||||

|

||||

if infer_state.is_context_stage:

|

||||

infer_state.position_cos = torch.index_select(self._cos_cached, 0, position_ids.view(-1)).view(

|

||||

position_ids.view(-1).shape[0], -1

|

||||

)

|

||||

infer_state.position_sin = torch.index_select(self._sin_cached, 0, position_ids.view(-1)).view(

|

||||

position_ids.view(-1).shape[0], -1

|

||||

)

|

||||

|

||||

else:

|

||||

seq_len = infer_state.seq_len

|

||||

infer_state.position_cos = torch.index_select(self._cos_cached, 0, seq_len - 1).view(seq_len.shape[0], -1)

|

||||

infer_state.position_sin = torch.index_select(self._sin_cached, 0, seq_len - 1).view(seq_len.shape[0], -1)

|

||||

infer_state.other_kv_index = infer_state.block_loc[0, infer_state.max_len_in_batch - 1].item()

|

||||

|

||||

# embed positions

|

||||

if attention_mask is None:

|

||||

attention_mask = torch.ones(

|

||||

(batch_size, infer_state.max_len_in_batch), dtype=torch.bool, device=hidden_states.device

|

||||

)

|

||||

|

||||

attention_mask = self._prepare_decoder_attention_mask(

|

||||

attention_mask, (batch_size, seq_length), hidden_states, past_key_values_length

|

||||

)

|

||||

|

||||

# decoder layers

|

||||

infer_state.decode_layer_id = 0

|

||||

|

||||

start_idx, end_idx = stage_index[0], stage_index[1]

|

||||

if past_key_values is None:

|

||||

past_key_values = tuple([None] * (end_idx - start_idx + 1))

|

||||

|

||||

for idx, past_key_value in zip(range(start_idx, end_idx), past_key_values):

|

||||

decoder_layer = self.layers[idx]

|

||||

# NOTE: modify here for passing args to decoder layer

|

||||

layer_outputs = decoder_layer(

|

||||

hidden_states,

|

||||

attention_mask=attention_mask,

|

||||

position_ids=position_ids,

|

||||

past_key_value=past_key_value,

|

||||

output_attentions=output_attentions,

|

||||

use_cache=use_cache,

|

||||

infer_state=infer_state,

|

||||

)

|

||||

infer_state.decode_layer_id += 1

|

||||

hidden_states = layer_outputs[0]

|

||||

|

||||

if stage_manager.is_last_stage() or stage_manager.num_stages == 1:

|

||||

hidden_states = self.norm(hidden_states)

|

||||

|

||||

# update indices

|

||||

# infer_state.block_loc[:, infer_state.max_len_in_batch-1] = infer_state.total_token_num + torch.arange(0, batch_size, dtype=torch.int32, device="cuda")

|

||||

infer_state.start_loc += torch.arange(0, batch_size, dtype=torch.int32, device="cuda")

|

||||

infer_state.seq_len += 1

|

||||

infer_state.max_len_in_batch += 1

|

||||

|

||||

# if not return_dict:

|

||||

# return tuple(v for v in [hidden_states, next_cache, all_hidden_states, all_self_attns] if v is not None)

|

||||

|

||||

# return BaseModelOutputWithPast(

|

||||

# last_hidden_state=hidden_states,

|

||||

# past_key_values=next_cache,

|

||||

# hidden_states=all_hidden_states,

|

||||

# attentions=all_self_attns,

|

||||

# )

|

||||

return {"hidden_states": hidden_states}
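The prefill/decode bookkeeping in `llama_model_forward` can be hard to follow inline. The following toy model is a simplified sketch of how the position ids and length counters evolve; `ToyInferState`, `step_position_ids`, and `advance` are hypothetical names, and the real `BatchInferState` also carries tensors and a cache manager that are omitted here.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ToyInferState:
    """Hypothetical, stripped-down stand-in for BatchInferState."""
    seq_len: List[int]
    max_len_in_batch: int
    is_context_stage: bool = True


def step_position_ids(state: ToyInferState, seq_length: int) -> List[int]:
    """Return position ids for one sequence: the whole prompt in prefill, one new slot in decode."""
    if seq_length != 1:
        state.is_context_stage = True  # prefill: no past keys/values yet
        past_len = 0
    else:
        state.is_context_stage = False  # decode: everything before the new token is past
        past_len = state.max_len_in_batch - 1
    return list(range(past_len, past_len + seq_length))


def advance(state: ToyInferState) -> None:
    """Mirror the index update at the end of the forward pass."""
    state.seq_len = [n + 1 for n in state.seq_len]
    state.max_len_in_batch += 1
```

For a 4-token prompt this yields positions 0 to 3 during prefill, then 4, 5, and so on for each decode step, because the counters are advanced once per forward pass.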

|

||||

|

||||

@staticmethod

|

||||

def llama_decoder_layer_forward(

|

||||

self: LlamaDecoderLayer,

|

||||

hidden_states: torch.Tensor,

|

||||

attention_mask: Optional[torch.Tensor] = None,

|

||||

position_ids: Optional[torch.LongTensor] = None,

|

||||

past_key_value: Optional[Tuple[torch.Tensor]] = None,

|

||||

output_attentions: Optional[bool] = False,

|

||||

use_cache: Optional[bool] = False,

|

||||

infer_state: Optional[BatchInferState] = None,

|

||||

) -> Tuple[torch.FloatTensor, Optional[Tuple[torch.FloatTensor, torch.FloatTensor]]]:

|

||||

residual = hidden_states

|

||||

|

||||

hidden_states = self.input_layernorm(hidden_states)

|

||||

# Self Attention

|

||||

hidden_states, self_attn_weights, present_key_value = self.self_attn(

|

||||

hidden_states=hidden_states,

|

||||

attention_mask=attention_mask,

|

||||

position_ids=position_ids,

|

||||

past_key_value=past_key_value,

|

||||

output_attentions=output_attentions,

|

||||

use_cache=use_cache,

|

||||

infer_state=infer_state,

|

||||

)

|

||||

|

||||

hidden_states = residual + hidden_states

|

||||

|

||||

# Fully Connected

|

||||

residual = hidden_states

|

||||

hidden_states = self.post_attention_layernorm(hidden_states)

|

||||

hidden_states = self.mlp(hidden_states)

|

||||

hidden_states = residual + hidden_states

|

||||

|

||||

outputs = (hidden_states,)

|

||||

|

||||

if output_attentions:

|

||||

outputs += (self_attn_weights,)

|

||||

|

||||

if use_cache:

|

||||

outputs += (present_key_value,)

|

||||

|

||||

return outputs

|

||||

|

||||

@staticmethod

|

||||

def llama_flash_attn_kvcache_forward(

|

||||

self: LlamaAttention,

|

||||

hidden_states: torch.Tensor,

|

||||

attention_mask: Optional[torch.Tensor] = None,

|

||||

position_ids: Optional[torch.LongTensor] = None,

|

||||

past_key_value: Optional[Tuple[torch.Tensor]] = None,

|

||||

output_attentions: bool = False,

|

||||

use_cache: bool = False,

|

||||

infer_state: Optional[BatchInferState] = None,

|

||||

) -> Tuple[torch.Tensor, Optional[torch.Tensor], Optional[Tuple[torch.Tensor]]]:

|

||||

assert use_cache is True, "use_cache should be set to True using this llama attention"

|

||||

|

||||

bsz, q_len, _ = hidden_states.size()

|

||||

|

||||

# NOTE might think about better way to handle transposed k and v

|

||||

# key_states [bs, seq_len, num_heads, head_dim/embed_size_per_head]

|

||||

# key_states_transposed [bs, num_heads, seq_len, head_dim/embed_size_per_head]

|

||||

|

||||

query_states = self.q_proj(hidden_states).view(bsz, q_len, self.num_heads, self.head_dim)

|

||||

key_states = self.k_proj(hidden_states).view(bsz, q_len, self.num_key_value_heads, self.head_dim)

|

||||

value_states = self.v_proj(hidden_states).view(bsz, q_len, self.num_key_value_heads, self.head_dim)

|

||||

|

||||

# NOTE might want to revise

|

||||

# need some way to record the length of past key values cache

|

||||

# since we won't return past_key_value_cache right now

|

||||

|

||||

cos, sin = infer_state.position_cos, infer_state.position_sin

|

||||

|

||||

llama_rotary_embedding_fwd(query_states.view(-1, self.num_heads, self.head_dim), cos, sin)

|

||||

llama_rotary_embedding_fwd(key_states.view(-1, self.num_key_value_heads, self.head_dim), cos, sin)

|

||||

|

||||

query_states = query_states.reshape(-1, self.num_heads, self.head_dim)

|

||||

key_states = key_states.reshape(-1, self.num_key_value_heads, self.head_dim)

|

||||

value_states = value_states.reshape(-1, self.num_key_value_heads, self.head_dim)

|

||||

|

||||

if infer_state.is_context_stage:

|

||||

# first token generation

|

||||

# copy key and value calculated in current step to memory manager

|

||||

copy_kv_to_mem_cache(

|

||||

infer_state.decode_layer_id,

|

||||

key_states,

|

||||

value_states,

|

||||

infer_state.context_mem_index,

|

||||

infer_state.cache_manager,

|

||||

)

|

||||

attn_output = torch.empty_like(query_states)

|

||||

|

||||

llama_triton_context_attention(

|

||||

query_states,

|

||||

key_states,

|

||||

value_states,

|

||||

attn_output,

|

||||

infer_state,

|

||||

num_key_value_groups=self.num_key_value_groups,

|

||||

)

|

||||

else:

|

||||

if infer_state.decode_is_contiguous:

|

||||

# if decode is contiguous, then we copy to key cache and value cache in cache manager directly

|

||||

cache_k = infer_state.cache_manager.key_buffer[infer_state.decode_layer_id][

|

||||

infer_state.decode_mem_start : infer_state.decode_mem_end, :, :

|

||||

]

|

||||

cache_v = infer_state.cache_manager.value_buffer[infer_state.decode_layer_id][

|

||||

infer_state.decode_mem_start : infer_state.decode_mem_end, :, :

|

||||

]

|

||||

cache_k.copy_(key_states)

|

||||

cache_v.copy_(value_states)

|

||||

else:

|

||||

# if decode is not contiguous, use triton kernel to copy key and value cache

|

||||

# k, v shape: [batch_size, num_heads, head_dim/embed_size_per_head]

|

||||

copy_kv_to_mem_cache(

|

||||

infer_state.decode_layer_id,

|

||||

key_states,

|

||||

value_states,

|

||||

infer_state.decode_mem_index,

|

||||

infer_state.cache_manager,

|

||||

)

|

||||

|

||||

if HAS_LIGHTLLM_KERNEL:

|

||||

attn_output = torch.empty_like(query_states)

|

||||

llama_triton_token_attention(

|

||||

query_states, attn_output, infer_state, num_key_value_groups=self.num_key_value_groups

|

||||

)

|

||||

else:

|

||||

# NOTE: the GQA group size (self.num_heads // self.num_key_value_heads) is not needed on this path

|

||||

cache_k = infer_state.cache_manager.key_buffer[infer_state.decode_layer_id]

|

||||

cache_v = infer_state.cache_manager.value_buffer[infer_state.decode_layer_id]

|

||||

|

||||

query_states = query_states.view(bsz, -1, self.num_heads, self.head_dim)

|

||||

copy_cache_k = cache_k.view(bsz, -1, self.num_key_value_heads, self.head_dim)

|

||||

copy_cache_v = cache_v.view(bsz, -1, self.num_key_value_heads, self.head_dim)

|

||||

|

||||

attn_output = flash_attn_with_kvcache(

|

||||

q=query_states,

|

||||

k_cache=copy_cache_k,

|

||||

v_cache=copy_cache_v,

|

||||

softmax_scale=1 / math.sqrt(self.head_dim),

|

||||

causal=True,

|

||||

)

|

||||

|

||||

attn_output = attn_output.view(bsz, q_len, self.hidden_size)

|

||||

|

||||

attn_output = self.o_proj(attn_output)

|

||||

|

||||

# return past_key_value as None

|

||||

return attn_output, None, None
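The decode branch above writes the new key/value rows into the cache in one of two ways: a slice copy when the allocator returned a contiguous range, or an index-based copy otherwise. A list-based sketch of that distinction follows; `write_contiguous` and `write_scattered` are hypothetical helpers, and the real code operates on GPU tensors through `copy_` and `copy_kv_to_mem_cache`.

```python
from typing import List, Sequence


def write_contiguous(buffer: List[object], start: int, end: int, new_rows: Sequence[object]) -> None:
    """Copy new rows into buffer[start:end]; valid only when the slots are adjacent."""
    if end - start != len(new_rows):
        raise ValueError("slot range and number of rows differ")
    buffer[start:end] = list(new_rows)


def write_scattered(buffer: List[object], indices: Sequence[int], new_rows: Sequence[object]) -> None:
    """Copy each new row to its own slot index; works for arbitrary free slots."""
    if len(indices) != len(new_rows):
        raise ValueError("index count and number of rows differ")
    for idx, row in zip(indices, new_rows):
        buffer[idx] = row
```

The contiguous path is a single bulk copy, which is why the allocator is asked for a contiguous block first and the scattered path is only the fallback.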

|

||||

|

|

@ -0,0 +1,3 @@

|

|||

from .llama import LlamaModelInferPolicy

|

||||

|

||||

__all__ = ["LlamaModelInferPolicy"]

|

||||

|

|

@ -0,0 +1,142 @@

|

|||

from functools import partial

|

||||

from typing import List

|

||||

|

||||

import torch

|

||||

from torch.nn import Module

|

||||

from transformers.models.llama.modeling_llama import (

|

||||

LlamaAttention,

|

||||

LlamaDecoderLayer,

|

||||

LlamaForCausalLM,

|

||||

LlamaModel,

|

||||

LlamaRMSNorm,

|

||||

)

|

||||

|

||||

from colossalai.shardformer.policies.base_policy import ModulePolicyDescription, SubModuleReplacementDescription

|

||||

|

||||

# import colossalai

|

||||

from colossalai.shardformer.policies.llama import LlamaForCausalLMPolicy

|

||||

|

||||

from ..modeling._utils import init_to_get_rotary

|

||||

from ..modeling.llama import LlamaInferenceForwards

|

||||

|

||||

try:

|

||||

from colossalai.kernel.triton import rmsnorm_forward

|

||||

|

||||

HAS_TRITON_RMSNORM = True

|

||||

except ImportError:

|

||||

print("you should install triton from https://github.com/openai/triton")

|

||||

HAS_TRITON_RMSNORM = False

|

||||

|

||||

|

||||

def get_triton_rmsnorm_forward():

|

||||

if HAS_TRITON_RMSNORM:

|

||||

|

||||

def _triton_rmsnorm_forward(self: LlamaRMSNorm, hidden_states: torch.Tensor):

|

||||

return rmsnorm_forward(hidden_states, self.weight.data, self.variance_epsilon)

|

||||

|

||||

return _triton_rmsnorm_forward

|

||||

else:

|

||||

return None
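For readers unfamiliar with what the Triton `rmsnorm_forward` replacement computes, here is a plain-Python reference of RMS normalization on one vector. `rmsnorm_reference` is a hypothetical helper, and the default `eps` value is an assumption, not the value configured by any particular model.

```python
import math
from typing import List


def rmsnorm_reference(hidden: List[float], weight: List[float], eps: float = 1e-6) -> List[float]:
    """Scale hidden by the reciprocal of its root mean square, then by a per-element weight."""
    mean_square = sum(v * v for v in hidden) / len(hidden)
    inv_rms = 1.0 / math.sqrt(mean_square + eps)
    return [v * inv_rms * w for v, w in zip(hidden, weight)]
```

With unit weights and `eps=0.0`, the output has a mean square of exactly 1, which is a convenient sanity check when comparing a fused kernel against a reference.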

|

||||

|

||||

|

||||

class LlamaModelInferPolicy(LlamaForCausalLMPolicy):

|

||||

def __init__(self) -> None:

|

||||

super().__init__()

|

||||

|

||||

def module_policy(self):

|

||||

policy = super().module_policy()

|

||||

|

||||

if self.shard_config.inference_gptq:

|

||||

from colossalai.inference.quant.gptq.cai_gptq import ColCaiQuantLinear, RowCaiQuantLinear

|

||||

|

||||

decoder_attribute_replacement = {

|

||||

"self_attn.hidden_size": self.model.config.hidden_size // self.shard_config.tensor_parallel_size,

|

||||

"self_attn.num_heads": self.model.config.num_attention_heads // self.shard_config.tensor_parallel_size,

|

||||

}

|

||||

policy[LlamaDecoderLayer] = ModulePolicyDescription(

|

||||

attribute_replacement=decoder_attribute_replacement,

|

||||

sub_module_replacement=[

|

||||

SubModuleReplacementDescription(

|

||||

suffix="self_attn.q_proj",

|

||||

target_module=ColCaiQuantLinear,

|

||||

kwargs={"split_num": 1},

|

||||

),

|

||||

SubModuleReplacementDescription(

|

||||

suffix="self_attn.k_proj",

|

||||

target_module=ColCaiQuantLinear,

|

||||

kwargs={"split_num": 1},

|

||||

),

|

||||

SubModuleReplacementDescription(

|

||||

suffix="self_attn.v_proj",

|

||||

target_module=ColCaiQuantLinear,

|

||||

kwargs={"split_num": 1},

|

||||

),

|

||||

SubModuleReplacementDescription(

|

||||

suffix="self_attn.o_proj",

|

||||

target_module=RowCaiQuantLinear,

|

||||

kwargs={"split_num": 1},

|

||||

),

|

||||

SubModuleReplacementDescription(

|

||||

suffix="mlp.gate_proj",

|

||||

target_module=ColCaiQuantLinear,

|

||||

kwargs={"split_num": 1},

|

||||

),

|

||||

SubModuleReplacementDescription(

|

||||

suffix="mlp.up_proj",

|

||||

target_module=ColCaiQuantLinear,

|

||||

kwargs={"split_num": 1},

|

||||

),

|

||||

SubModuleReplacementDescription(

|

||||

suffix="mlp.down_proj",

|

||||

target_module=RowCaiQuantLinear,

|

||||

kwargs={"split_num": 1},

|

||||

),

|

||||

],

|

||||

)

|

||||

|

||||

self.shard_config._infer()

|

||||

|

||||

infer_forward = LlamaInferenceForwards.llama_model_forward

|

||||

method_replacement = {"forward": partial(infer_forward)}

|

||||

self.append_or_create_method_replacement(description=method_replacement, policy=policy, target_key=LlamaModel)

|

||||

|

||||

infer_forward = LlamaInferenceForwards.llama_decoder_layer_forward

|

||||

method_replacement = {"forward": partial(infer_forward)}

|

||||

self.append_or_create_method_replacement(

|

||||

description=method_replacement, policy=policy, target_key=LlamaDecoderLayer

|

||||

)

|

||||

|

||||

infer_forward = LlamaInferenceForwards.llama_flash_attn_kvcache_forward

|

||||

method_replacement = {"forward": partial(infer_forward)}

|

||||

self.append_or_create_method_replacement(

|

||||

description=method_replacement, policy=policy, target_key=LlamaAttention

|

||||

)

|

||||

|

||||

if self.pipeline_stage_manager:

|

||||

# set None as default

|

||||

self.set_pipeline_forward(

|

||||

model_cls=LlamaForCausalLM, new_forward=LlamaInferenceForwards.llama_causal_lm_forward, policy=policy

|

||||

)

|

||||

infer_forward = None

|

||||

if HAS_TRITON_RMSNORM:

|

||||

infer_forward = get_triton_rmsnorm_forward()

|

||||

|

||||

if infer_forward is not None:

|

||||

method_replacement = {"forward": partial(infer_forward)}

|

||||

self.append_or_create_method_replacement(

|

||||

description=method_replacement, policy=policy, target_key=LlamaRMSNorm

|

||||

)

|

||||

|

||||

return policy
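`module_policy` registers replacement `forward` functions for several module classes. How ShardFormer binds them is not shown in this section, so the sketch below illustrates only the general idea of swapping a method on an instance, using hypothetical names (`ToyNorm`, `scaled_forward`, `replace_forward`).

```python
import types


class ToyNorm:
    """Hypothetical module with a default forward that returns its input unchanged."""

    def __init__(self, scale: float) -> None:
        self.scale = scale

    def forward(self, x: float) -> float:
        return x


def scaled_forward(self: ToyNorm, x: float) -> float:
    """Replacement forward; takes the module as its first argument, like the forwards above."""
    return x * self.scale


def replace_forward(module: ToyNorm, new_forward) -> None:
    """Bind new_forward to this instance so module.forward(x) calls it with self=module."""
    module.forward = types.MethodType(new_forward, module)
```

This is why the replacement functions in `LlamaInferenceForwards` are static methods whose first parameter is named `self`: each one is written as a free function and is expected to be bound to the target module later.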

|

||||

|

||||

def postprocess(self):

|

||||

init_to_get_rotary(self.model.model)

|

||||

return self.model

|

||||

|

||||

def get_held_layers(self) -> List[Module]:

|

||||

"""Get pipeline layers for current stage."""

|

||||

stage_manager = self.pipeline_stage_manager

|

||||

held_layers = super().get_held_layers()

|

||||

if stage_manager.is_first_stage():

|

||||

held_layers.append(self.model.lm_head)

|

||||

return held_layers

|

||||

|

|

@ -0,0 +1,4 @@

|

|||

from .cai_gptq import HAS_AUTO_GPTQ

|

||||

|

||||

if HAS_AUTO_GPTQ:

|

||||

from .cai_gptq import CaiGPTQLinearOp, CaiQuantLinear

|

||||

|

|

@ -0,0 +1,14 @@

|

|||

import warnings

|

||||

|

||||

HAS_AUTO_GPTQ = False

|

||||

try:

|

||||

import auto_gptq

|

||||

|

||||

HAS_AUTO_GPTQ = True

|

||||

except ImportError:

|

||||

warnings.warn("please install auto-gptq from https://github.com/PanQiWei/AutoGPTQ")

|

||||

HAS_AUTO_GPTQ = False

|

||||

|

||||

if HAS_AUTO_GPTQ:

|

||||

from .cai_quant_linear import CaiQuantLinear, ColCaiQuantLinear, RowCaiQuantLinear

|

||||

from .gptq_op import CaiGPTQLinearOp

|

||||

|

|

@ -0,0 +1,354 @@

|

|||

# Adapted from AutoGPTQ auto_gptq: https://github.com/PanQiWei/AutoGPTQ

|

||||

|

||||

import math

|

||||

import warnings

|

||||

from typing import List, Union

|

||||

|

||||

import numpy as np

|

||||

import torch

|

||||

import torch.distributed as dist

|

||||

import torch.nn as nn

|

||||

from torch.distributed import ProcessGroup

|

||||

|

||||

from colossalai.lazy import LazyInitContext

|

||||